How Navan evaluates their AI voice agent

With Sarav Bhatia, Sr. Dir. of Engineering and Alfred Gao, Engineering

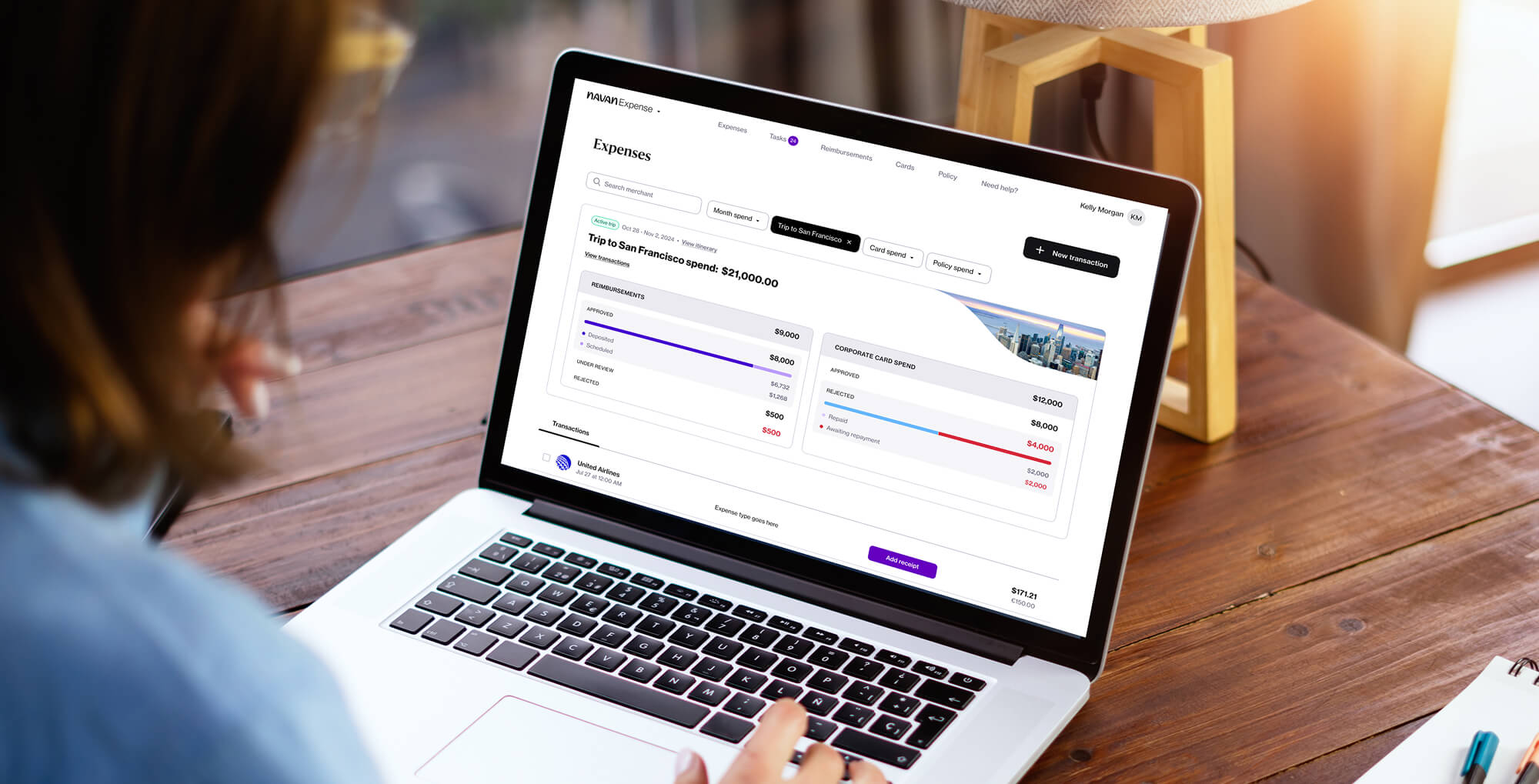

Navan is an all-in-one travel and expense management platform serving companies worldwide. Their mission is to make business travel effortless for frequent travelers, handling everything from the moment a card is swiped to when the books are closed in a traditional ERP system with zero manual effort.

When Navan needed to solve the last-mile challenge of communicating payment details to millions of hotels worldwide, they built Miles, an AI voice agent that calls hotels and speaks with real humans at the front desk. The agent handles complex workflows including form filling, multilingual support, and payment confirmations. But as call volume grew to hundreds per day, the team needed a way to systematically evaluate and improve quality without manually listening to every conversation.

The challenge: Solving hotel payment at scale

Hotels present a unique challenge in travel booking. While major airlines like United offer API integrations that track every change, baggage fee, and seat selection, the hotel industry has a long tail of suppliers without modern integrations. Each hotel has its own systems, policies, and processes. A traveler might want to stay at a unique bed and breakfast in Paris, and Navan wants to make that supply available, but that property likely doesn't have APIs to receive payment card details programmatically.

This creates two painful last-mile problems for travelers and finance teams. First, travelers often arrive without knowing the hotel doesn't have their virtual card or credit card authorization (CCA) on file. This leads to personal card use, out-of-policy spend, and headaches for finance teams trying to reconcile it all. Second, if a traveler's flight is delayed or they arrive after hours, the hotel might cancel the booking or give away the room. A simple confirmation call could prevent both issues, but traditionally this would require hiring hundreds or thousands of agents working around the clock. Navan needed to solve this at scale using AI.

Building a voice agent

"When we started to build this AI agent very quickly, what started happening was that hundreds of calls started happening on behalf of our travelers. What became very clear was that the development team, the ops team, could not hear every call to be able to learn and understand how well those calls are going."

Miles calls hotels ahead of a traveler's arrival to confirm bookings and provide payment details. The team built the AI voice agent using a combination of Google ADK, LangGraph, and OpenAI. But as Sarav Bhatia, Senior Director of Software Engineering at Navan, explains, the real challenge wasn't building the agent itself.

Rethinking the software development lifecycle

Traditional software development follows a linear path: code, test, deploy, monitor. You ship the feature and typically move on.

For AI voice agents, the real work begins after deployment. Once the code is written and the agent is live, the team enters a continuous loop: observe production calls, understand the data, create more evaluations, and refine the system. This cycle repeats constantly. The coding is straightforward, but the hard part is building systems to supervise hundreds of daily calls, prevent regressions, and create a continuous improvement process based on real-world performance.

This is where Navan partnered with Braintrust to build their evaluation loop.

Building the eval infrastructure

1. Establish the baseline and understand the data

"We firstly label our voice call according to a script. It's a call set we collected from our first trial of production calls. And it gives us a very high-level structure of how we can evaluate the performance."

The team started by manually labeling voice calls according to a script collected from their first production trials. This created high-level buckets for categorizing call outcomes: success, failure, and various subcategories that require different follow-up actions. They then manually listened to the first 200 production calls to understand how user behavior and edge cases were distributed. This human effort established patterns that would later inform automated evaluation.
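As a minimal sketch of this baseline step, the snippet below tallies manually assigned labels to show how outcomes are distributed. The label names and the four sample calls are hypothetical placeholders, not Navan's data or label set.

```python
from collections import Counter

# Hypothetical labels for illustration only; the real buckets come from
# the script the team used when listening to production calls.
labeled_calls = [
    {"call_id": "c1", "label": "success"},
    {"call_id": "c2", "label": "cannot_pass_ivr"},
    {"call_id": "c3", "label": "success"},
    {"call_id": "c4", "label": "human_follow_up"},
]


def label_distribution(calls):
    """Return each label's share of the labeled calls, most common first."""
    counts = Counter(call["label"] for call in calls)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.most_common()}


distribution = label_distribution(labeled_calls)
for label, share in distribution.items():
    print(f"{label}: {share:.0%}")
```

A table like this makes it easier to see which failure buckets are common enough to need their own evaluation cases.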

2. Scale with automated evaluation

Once the team understood the distribution, they turned to OpenAI's GPT audio model to evaluate calls at scale. Rather than converting speech to text (which introduces transcription errors), the team at Navan passes raw audio files directly to the model. This approach has an additional benefit: the model can detect nuances like tone, semantic differences, and whether the hotel representative provided a genuine reply, details that transcripts miss.
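One way to pass raw audio rather than a transcript is to base64-encode the recording and attach it as an audio content part in a chat request. The sketch below only builds the message payload. The function name, rubric text, and placeholder bytes are illustrative assumptions, and the model name in the trailing comment is an assumption too, since the source does not say which audio model Navan uses.

```python
import base64


def build_audio_eval_messages(audio_bytes, rubric, audio_format="wav"):
    """Build chat messages that carry the call audio itself, not a transcript."""
    encoded = base64.b64encode(audio_bytes).decode("ascii")
    return [
        {"role": "system", "content": rubric},
        {
            "role": "user",
            "content": [
                {"type": "text", "text": "Classify the outcome of this call."},
                {
                    "type": "input_audio",
                    "input_audio": {"data": encoded, "format": audio_format},
                },
            ],
        },
    ]


# Placeholder bytes stand in for a real recording.
messages = build_audio_eval_messages(
    b"RIFF-placeholder", rubric="You grade hotel confirmation calls."
)

# Sending the request needs the openai package and an API key.
# The model name below is an assumption, not taken from the source:
# from openai import OpenAI
# response = OpenAI().chat.completions.create(
#     model="gpt-4o-audio-preview", modalities=["text"], messages=messages
# )
```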

3. Continuous improvement with minimal human effort

The team maintains a continuous loop where each batch of production calls provides real-time feedback on data distribution, allowing them to adjust their evaluation system accordingly. They keep minimal human oversight to catch long-tail cases and edge scenarios that automated systems might miss.

Evaluating the evaluators

One of Navan's most important insights was treating their evaluation system itself as something that needed rigorous testing. The team approached this by treating their eval prompt as a machine learning classifier:

Data preparation: They collected real production calls through Braintrust and split the data into training and testing sets, following standard machine learning practices.

Optimization: They optimized the evaluation system's performance using macro F1 score as their primary metric, improving from 0.56 to 0.89 through iterative refinement.
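To make the metric concrete, here is a small pure-Python sketch of a reproducible train/test split and a macro F1 computation, where macro F1 is the unweighted mean of per-class F1 scores. The split ratio, seed, and example labels are assumptions for illustration and are not Navan's data.

```python
import random


def split_calls(calls, test_fraction=0.3, seed=0):
    """Shuffle with a fixed seed and hold out a fraction of calls for testing."""
    shuffled = list(calls)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over every class seen in either list."""
    classes = sorted(set(y_true) | set(y_pred))
    scores = []
    for cls in classes:
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Hypothetical human labels versus LLM-judge labels for four calls.
human = ["success", "success", "failure", "follow_up"]
judge = ["success", "failure", "failure", "follow_up"]
score = macro_f1(human, judge)
print(f"macro F1 = {score:.3f}")
```

Because macro F1 weights every class equally, a rare failure bucket that the judge misclassifies pulls the score down as much as a common one, which suits a setting where the rare cases are the ones that need follow-up.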

Prompt engineering with chain of thought: They added chain of thought reasoning to their prompts, making the model follow the same reasoning steps that humans use when manually listening to calls. This helps identify exactly where mistakes happen in the evaluation process.

For example, when reviewing a false positive where the eval incorrectly classified a call as successful, the team could trace the error back to the first reasoning step (a sanity check that incorrectly reported the system output as non-empty). By logging each reasoning step in Braintrust, they could debug and tune their prompts with precision.

"By logging the LLM's reasoning steps, we can easily debug and triage where the bug is coming from for our LLM judge. So we can easily tune our prompt."
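The debugging pattern described above can be sketched as follows: the judge returns its ordered reasoning steps as structured output, and a reviewer's answers for the same steps are compared against them to find the earliest disagreement. The JSON shape and step names are hypothetical, since the source does not publish Navan's prompt or output schema.

```python
import json

# Hypothetical judge output; step names and structure are assumptions.
judge_output = json.loads("""
{
  "steps": [
    {"name": "output_non_empty", "answer": true},
    {"name": "hotel_confirmed_booking", "answer": true},
    {"name": "payment_details_received", "answer": true}
  ],
  "label": "success"
}
""")

# What a human reviewer concluded for the same call (also hypothetical).
human_review = {
    "output_non_empty": False,
    "hotel_confirmed_booking": False,
    "payment_details_received": False,
}


def first_disagreement(judge, human_answers):
    """Return the name of the earliest step where judge and human differ, or None.

    A step the human did not answer counts as a disagreement.
    """
    for step in judge["steps"]:
        if human_answers.get(step["name"]) != step["answer"]:
            return step["name"]
    return None


culprit = first_disagreement(judge_output, human_review)
print(f"First diverging step: {culprit}")
```

Pinpointing the first diverging step narrows prompt tuning to one instruction instead of the whole rubric.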

Using reasoning models for complex tasks: For complex evaluation tasks requiring multi-step reasoning and context changes, the team found that GPT-4o with explicit chain of thought prompting offered the best balance of performance and cost. For tasks where accuracy was the top priority, reasoning models like OpenAI's o1, which performs chain of thought natively during inference, achieved the highest performance, reaching a macro F1 score above 0.9 across all control groups at the cost of higher latency and expense.

The trade-off is clear: reasoning models are slower and more expensive, but they provide more explainable reasoning and better performance when accuracy matters more than latency or cost.

Classification and human handoffs

Navan's evaluation system classifies each call into specific categories that drive operational workflows:

Success: The task is complete. The hotel representative acknowledges the late check-in, confirms they have the booking information and payment details, and can keep the reservation.

Failure subcategories: The system identifies specific failure modes including:

- Cannot pass IVR system

- Requires manual effort

- Agent disconnected

- Missing information

"We have a dashboard that's filtered to any kind of follow-up that is needed by a human. So the ops team basically just goes ahead and processes the data that the eval has been categorized as human follow-up."

Human follow-up required: Some calls need human intervention. For example, when a hotel requests a payment link instead of accepting a virtual card directly, the evaluation system flags this for the payment operations team. These team members review a filtered dashboard in Braintrust showing only calls requiring human follow-up, allowing them to efficiently process the subset of calls that need attention.
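The categories above map naturally onto an enumeration plus a filter that produces the follow-up queue. This is a sketch under assumptions: the identifier names are invented here, and treating only the human follow-up category as needing operator attention is a guess rather than Navan's documented routing rule.

```python
from enum import Enum


class CallOutcome(Enum):
    SUCCESS = "success"
    CANNOT_PASS_IVR = "cannot_pass_ivr"
    REQUIRES_MANUAL_EFFORT = "requires_manual_effort"
    AGENT_DISCONNECTED = "agent_disconnected"
    MISSING_INFORMATION = "missing_information"
    HUMAN_FOLLOW_UP = "human_follow_up"


# Assumption: which outcomes route to the ops team is not stated in the source.
NEEDS_HUMAN = {CallOutcome.HUMAN_FOLLOW_UP}


def follow_up_queue(evaluated_calls):
    """Keep only calls whose evaluated outcome requires a human to act."""
    return [
        call for call in evaluated_calls
        if CallOutcome(call["outcome"]) in NEEDS_HUMAN
    ]


# Hypothetical evaluated calls.
calls = [
    {"call_id": "c1", "outcome": "success"},
    {"call_id": "c2", "outcome": "human_follow_up", "note": "hotel wants a payment link"},
    {"call_id": "c3", "outcome": "agent_disconnected"},
]
queue = follow_up_queue(calls)
print([call["call_id"] for call in queue])
```

Parsing the outcome through the enum means an unexpected label from the judge raises a `ValueError` instead of silently dropping out of the queue.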

Getting the voice right: The importance of personality

Beyond technical accuracy, Navan invested heavily in making their voice agent sound natural. Their first version sounded robotic. Hotels would hang up. Others didn't trust it.

The team experimented with different personality-driven prompts, asking the agent to try different roles: a helpful executive assistant, a support agent, a professional with urgency, and someone new to their job who was a little nervous and deferential. The slightly nervous voice worked best.

"When we told it to act a little nervous, it would slow down and naturally add the right 'ums' and pauses. It felt more relatable, like someone new trying to be helpful. That's what made hotels stay on the line."

Hotel staff sometimes asked, "Are you a robot?" but they kept engaging. The goal wasn't to fool anyone, but to make the agent believable enough to have a productive conversation. The team fine-tuned latency in the initial greeting and carefully crafted the agent's personality to feel authentic. These improvements came from running A/B tests on different voice configurations and measuring outcomes systematically through Braintrust.
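The source does not say how the A/B results were analyzed. One common approach is a two-proportion z-test on call success rates, sketched below. The counts are made up for illustration and are not Navan's results.

```python
import math


def two_proportion_z(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates, B minus A."""
    p_a = success_a / total_a
    p_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (p_b - p_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: variant A = one voice persona, variant B = another.
z, p_value = two_proportion_z(success_a=40, total_a=100, success_b=60, total_b=100)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the difference between the two voice configurations is unlikely to be noise, though with only a few hundred calls per variant the estimate remains rough.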

Results

By building a rigorous evaluation system, Navan transformed how they develop AI features. The team now:

- Supervises hundreds of daily calls systematically without manual listening. Every call is logged in Braintrust, instrumented with agent behavior, reasoning steps, and intermediate data.

- Prevents regressions through continuous evaluation. Each change is tested against established baselines before deployment.

- Improves continuously through a tight data feedback loop. Production calls inform dataset updates, which drive eval improvements, which catch issues before they reach users.

- Ships with confidence. As Sarav describes their philosophy: "Eval-driven development is the new test-driven development. Any projects that we take up, the first step is identifying the eval set."

The team achieved over 0.9 macro F1 score across all control groups for their evaluation system, ensuring that their automated quality checks match human judgment with high accuracy.
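Testing each change against a baseline can be reduced to a simple gate that compares a candidate's eval scores with the stored baseline and blocks on any drop beyond a tolerance. The metric name, scores, and tolerance below are hypothetical, and this sketch does not use the Braintrust API.

```python
def regression_report(baseline, candidate, tolerance=0.01):
    """Map each regressed metric to (baseline, candidate).

    An empty dict means no metric dropped by more than the tolerance.
    A metric missing from the candidate is treated as a regression.
    """
    report = {}
    for metric, base_value in baseline.items():
        new_value = candidate.get(metric)
        if new_value is None or new_value < base_value - tolerance:
            report[metric] = (base_value, new_value)
    return report


# Hypothetical scores for illustration.
baseline = {"call_success_rate": 0.80}
candidate = {"call_success_rate": 0.75}
report = regression_report(baseline, candidate)
if report:
    print("Blocked, regressions found:", report)
else:
    print("No regressions, safe to deploy.")
```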

Before Braintrust: couldn't scale beyond 200 calls

Key lessons for voice agent evaluation

Based on Navan's experience, here are the critical takeaways:

- Start with evaluation before coding: Define your eval strategy first. The coding is easy. Evaluation and iteration drive progress.

- Keep your data feedback loop tight: Production deployment is the starting point, not the finish line. Real work happens in the continuous improvement cycle.

- Evaluate your evaluators: If your eval system gives inaccurate results, nothing else works. Treat eval prompts like ML classifiers and optimize them systematically.

- Use reasoning models for complex tasks: For multi-step evaluation requiring context awareness, chain of thought prompting with GPT-4o offers the best cost-performance balance, while reasoning models like o1 deliver the highest accuracy at higher cost. 1

- Log everything: Instrument every production call with full context. You can't improve what you can't measure.

- Balance automation with human oversight: Keep minimal human effort for long-tail cases while automating the bulk of evaluation work.

Conclusion

"Braintrust is the core of our evaluation framework process."

Navan demonstrates that voice AI at scale requires treating evaluation as a first-class discipline. By building a rigorous eval system, they can supervise hundreds of daily calls, continuously improve quality, and ship AI features with confidence. Looking ahead, the team is working to bring production data into their eval pipeline in real time, replaying actual production scenarios and running evaluations directly on those interactions to quickly switch models, measure before-and-after performance, and see end-to-end eval results faster.

Build evaluation systems for voice AI

Want to build a rigorous evaluation pipeline for your AI agents? Learn how Braintrust's evaluation and observability tools can help.

1 These were the best-performing models at the time of development. As the model lineup continues to evolve, teams should benchmark against current options.
