7 best tools for debugging AI agents in production (2026)

- Braintrust - Best overall debugging platform. Evaluation-first architecture that turns production failures into permanent test cases with one click and enforces CI/CD quality gates that block regressions before they ship.

- LangSmith - Deep tracing and AI-powered debugging for teams building on LangChain and LangGraph.

- Langfuse - Open-source tracing and prompt management with self-hosting for teams that need data control.

- Arize Phoenix - OpenTelemetry-native tracing with embedding clustering for teams that want vendor-agnostic debugging.

- Helicone - Proxy-based observability for teams debugging cost spikes and latency issues across providers.

- Vellum - Visual workflow builder with step-by-step execution debugging for low-code agent development.

- Galileo - Automated failure detection with high-volume production safety checks.

Many agent failures do not trigger visible errors, because the system still returns a successful status code even when the result is wrong. An agent may retrieve the wrong document, select the wrong tool, or pass incorrect parameters, and traditional monitoring will only show that the request completed. Monitoring does not record how the agent moved from one step to the next, which makes the issue difficult to trace or reproduce.

Debugging an AI agent means inspecting the full execution path of a request and identifying the specific step that caused the incorrect outcome. It depends on tools that expose model calls, tool usage, and intermediate decisions, so teams can understand what happened and keep the same issue from reaching production again.

This guide explains how debugging AI agents differs from traditional monitoring and observability, and reviews the best tools that support the full debugging workflow, from reconstructing execution paths to preventing regressions before production.

What is AI agent debugging?

Agent debugging is the process of tracing, isolating, and resolving failures in multi-step AI agent workflows running in production. Traditional software debugging relies on stack traces and error codes, but agent failures rarely produce either. An agent might misinterpret retrieved context, call the wrong API, or hallucinate an answer, all while returning a well-formed response to the user.

Effective agent debugging requires reconstructing the full execution path across every model call, tool invocation, and retrieval step so teams can see exactly what the agent did for a specific request. Once the full path is visible, teams identify the step that caused the incorrect behavior, reproduce the issue in a controlled environment, apply a fix, and convert the failure into a permanent evaluation case that prevents the same regression from shipping again.
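To make this concrete, the sketch below models a run whose HTTP status is 200 even though one intermediate step went wrong, then walks the execution path to find that step. The `Step` record, the per-step `check` predicates, and the refund scenario are all hypothetical; a real tracing platform records this data automatically and in far more detail.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Step:
    """One hypothetical step in an agent run (model call, tool call, or retrieval)."""
    name: str
    kind: str  # "llm", "tool", or "retrieval"
    inputs: dict
    output: Any
    # Optional predicate that returns True when the step behaved correctly.
    check: Optional[Callable[[dict, Any], bool]] = None


def first_failing_step(steps: list) -> Optional[Step]:
    """Walk the execution path in order and return the first step whose check fails."""
    for step in steps:
        if step.check is not None and not step.check(step.inputs, step.output):
            return step
    return None


# Retrieval returned order 1042, but the refund tool was called with order 1024.
run = [
    Step("retrieve_order", "retrieval", {"query": "order 1042"}, {"order_id": 1042}),
    Step(
        "refund_tool",
        "tool",
        {"order_id": 1024, "amount": 30.0},
        {"status": "ok"},
        check=lambda inputs, _out: inputs["order_id"] == 1042,
    ),
    Step("final_answer", "llm", {"prompt": "Confirm the refund."}, "Your refund has been issued."),
]
http_status = 200  # the request still "succeeds"

culprit = first_failing_step(run)
print(http_status, culprit.name if culprit else None)  # 200 refund_tool
```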

A debugging workflow is incomplete without evaluation. Fixing a production issue solves the immediate problem, but only adding that failure to an automated evaluation suite ensures it is caught if it recurs. Teams that ship stable AI products connect debugging, evaluation, and CI gating into a single process so every resolved failure becomes a test that protects future releases.

Debugging vs. monitoring vs. observability

Debugging, monitoring, and observability are often used interchangeably, but they describe different levels of visibility into your agent's behavior. Knowing how they differ helps you pick the right tool for the problem you are trying to solve.

| Criteria | Monitoring | Observability | Debugging |
|---|---|---|---|
| Primary question | "Is the system healthy?" | "What is happening inside the system?" | "Why did this specific request fail, and how do I fix it?" |
| Scope | Aggregate metrics like uptime, latency, error rates, and cost | Request-level traces showing the full execution path of each agent run | Step-level root-cause analysis with replay, diffing, and regression prevention |
| Typical output | Dashboards and alerts | Trace trees with inputs, outputs, and timing for every span | Isolated failure steps, reproducible test cases, and CI/CD quality gates |
| When you need it | You want to know if something is broken | You want to understand how your agent behaves across requests | You want to find exactly why a request failed and prevent it from happening again |
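The "diffing" in the Debugging column can be illustrated with a minimal sketch that compares a failing run against a successful run of the same request, step by step. The run format (a list of dicts with `name` and `args`) and the `diff_runs` helper are assumptions made for illustration, not any tool's API.

```python
from itertools import zip_longest


def diff_runs(good: list, bad: list) -> list:
    """Compare a successful and a failing run step by step and report divergences."""
    notes = []
    for i, (g, b) in enumerate(zip_longest(good, bad)):
        if g is None or b is None:
            # One run has extra steps the other never took.
            notes.append(f"step {i}: only in {'failing' if g is None else 'successful'} run")
        elif g["name"] != b["name"]:
            notes.append(f"step {i}: called {b['name']} instead of {g['name']}")
        elif g["args"] != b["args"]:
            notes.append(f"step {i}: {b['name']} args {b['args']} vs {g['args']}")
    return notes


good = [{"name": "retrieve", "args": {"k": 5}}, {"name": "lookup_order", "args": {"id": 1042}}]
bad = [
    {"name": "retrieve", "args": {"k": 5}},
    {"name": "lookup_order", "args": {"id": 1024}},
    {"name": "issue_refund", "args": {"id": 1024}},
]
for note in diff_runs(good, bad):
    print(note)
# step 1: lookup_order args {'id': 1024} vs {'id': 1042}
# step 2: only in failing run
```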

The 7 best tools for debugging AI agents in production (2026)

1. Braintrust

Best for: Teams scaling LLM applications who want production failures to automatically strengthen their eval suite and block bad releases before they impact customers.

Not for: Teams that only need basic request logging without evaluation workflows.

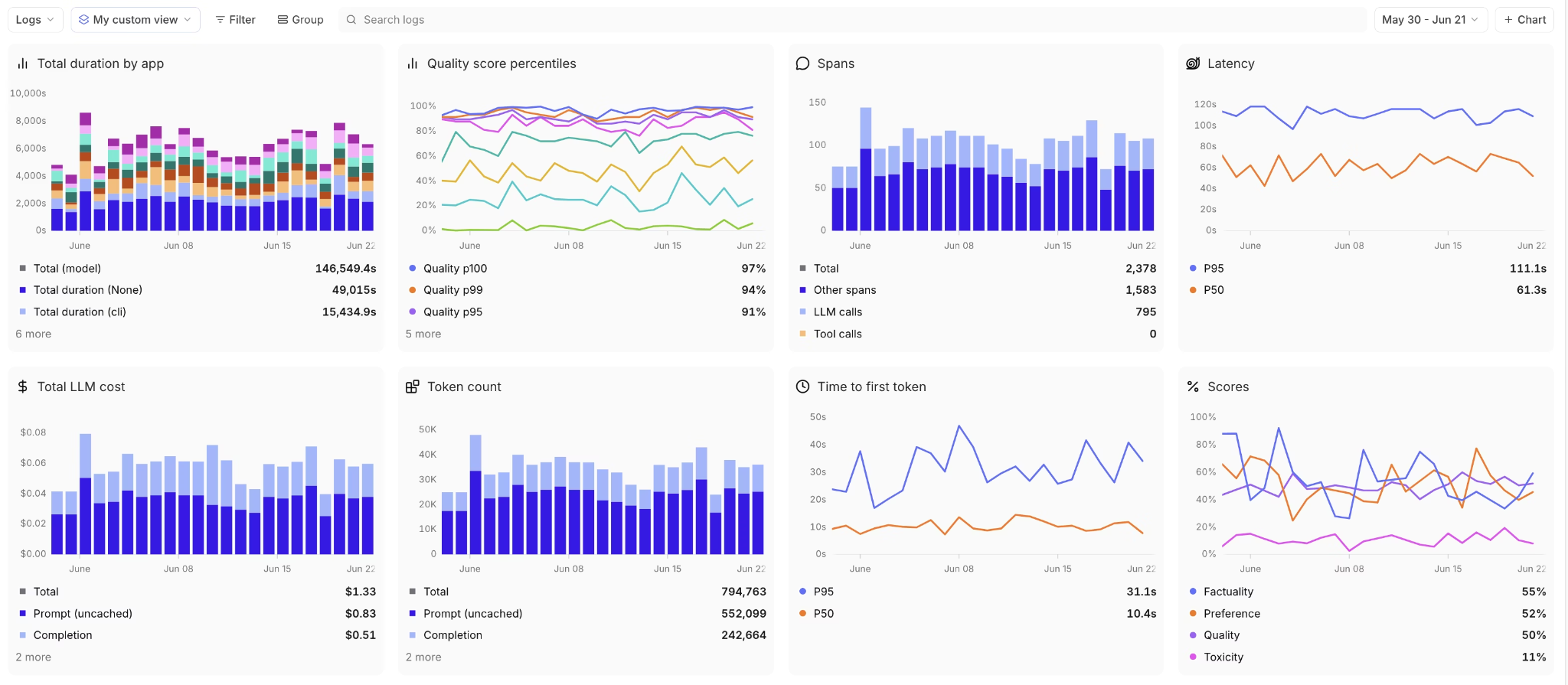

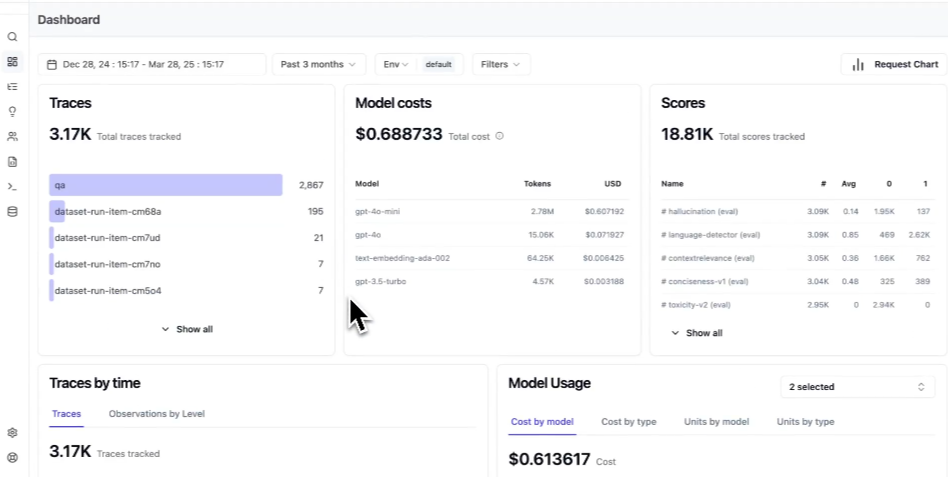

Braintrust unifies the entire agent debugging lifecycle into a single workflow, so production failures move quickly from diagnosis to prevention. Teams can trace the issue, isolate the failing step, validate a fix, convert the incident into a permanent eval case, and enforce it in CI before the next deployment. Each failure becomes a guardrail that improves reliability and reduces release risk over time.

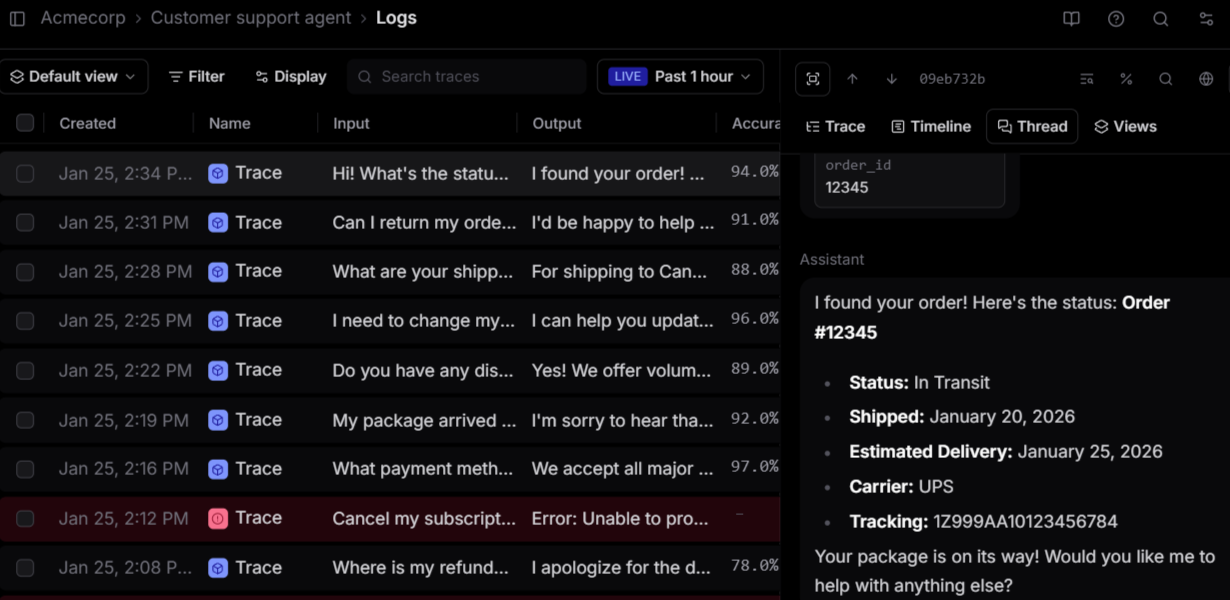

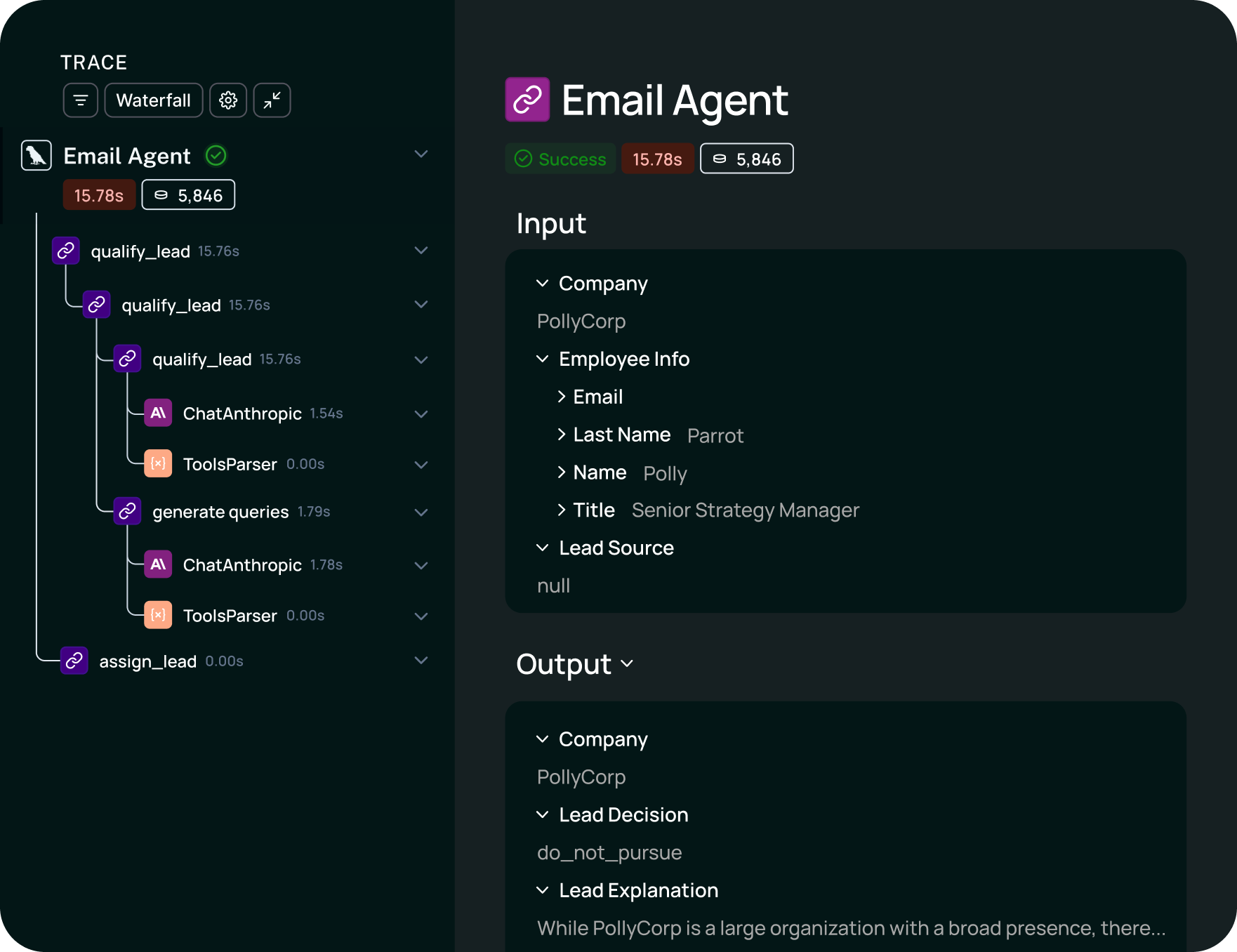

Reconstructing the full decision path

When an agent produces a bad output, you need to see every step it took to get there. Braintrust captures complete traces across model calls, tool invocations, and retrieval steps in an expandable tree of nested spans, where each span shows inputs, outputs, timing, cost, and evaluation scores.
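A trace of nested spans is essentially a tree. The sketch below is a simplified, hypothetical `Span` model (not Braintrust's schema) that shows how per-span timing, cost, and scores roll up and render as an indented tree.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Span:
    """Hypothetical span: one node in a trace tree."""
    name: str
    duration_ms: float
    cost_usd: float = 0.0
    score: Optional[float] = None
    children: List["Span"] = field(default_factory=list)


def total_cost(span: Span) -> float:
    """Sum the cost of a span and all of its descendants."""
    return span.cost_usd + sum(total_cost(child) for child in span.children)


def render(span: Span, depth: int = 0) -> List[str]:
    """Render the tree as indented lines: name, duration, cost, and score if present."""
    score = "" if span.score is None else f" score={span.score:.2f}"
    lines = [f"{'  ' * depth}{span.name} {span.duration_ms:.0f}ms ${span.cost_usd:.4f}{score}"]
    for child in span.children:
        lines.extend(render(child, depth + 1))
    return lines


trace = Span("agent_run", 2300, children=[
    Span("plan (llm)", 800, cost_usd=0.0021),
    Span("search_docs (retrieval)", 350, score=0.42),
    Span("answer (llm)", 1100, cost_usd=0.0035, score=0.9),
])
print("\n".join(render(trace)))
print(f"total cost: ${total_cost(trace):.4f}")  # total cost: $0.0056
```

Keeping cost and scores on each span, rather than only on the root, is what lets you see which step drove a cost spike or a low score.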

Brainstore, Braintrust's trace query engine, is optimized for AI trace data and loads results significantly faster than general-purpose databases. Faster queries translate directly into less time spent filtering through a week of production traffic to find the failure pattern you need to isolate.
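The kind of query a trace store speeds up looks roughly like this when expressed over an in-memory list: find traces from the last week where some span scored below a threshold, and count which span names fail most often. The record fields (`trace_id`, `name`, `score`, `ts`) are assumptions; this is not Brainstore's query syntax.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical flat span records, as they might be exported from a tracing backend.
spans = [
    {"trace_id": "t1", "name": "search_docs", "score": 0.31, "ts": datetime(2026, 1, 5)},
    {"trace_id": "t2", "name": "search_docs", "score": 0.92, "ts": datetime(2026, 1, 6)},
    {"trace_id": "t3", "name": "refund_tool", "score": 0.20, "ts": datetime(2026, 1, 7)},
    {"trace_id": "t4", "name": "search_docs", "score": 0.28, "ts": datetime(2025, 12, 1)},
    {"trace_id": "t5", "name": "search_docs", "score": 0.15, "ts": datetime(2026, 1, 7)},
]


def low_scoring_traces(spans, now, days=7, threshold=0.5):
    """Return IDs of traces with a span scored below `threshold` in the last `days` days,
    plus a count of which span names fail most often."""
    cutoff = now - timedelta(days=days)
    hits = [s for s in spans if s["ts"] >= cutoff and s["score"] < threshold]
    return sorted({s["trace_id"] for s in hits}), Counter(s["name"] for s in hits)


ids, by_name = low_scoring_traces(spans, now=datetime(2026, 1, 8))
print(ids)                     # ['t1', 't3', 't5']
print(by_name.most_common(1))  # [('search_docs', 2)]
```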

Turning failures into repeatable eval cases

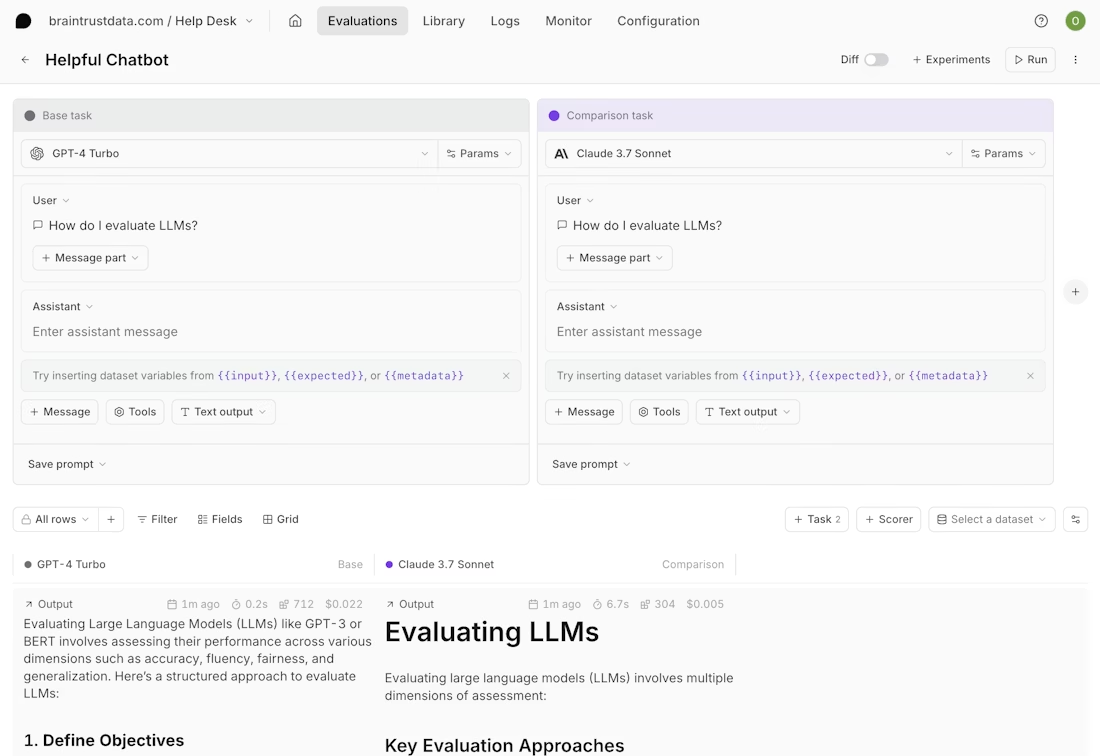

Once the failing step is identified, the trace can be loaded into Braintrust's Playground and re-run against the exact production inputs, tool calls, intermediate steps, and model configuration that triggered the failure. Unlike generic prompt tools that test prompts in isolation, the Playground preserves full execution context, so changes to prompts, parameters, routing logic, or tool responses are validated under the same real conditions. Results can be compared side by side to confirm the issue is fully resolved and does not introduce new regressions.
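Replaying against recorded context can be sketched as re-running one step with the input, retrieved document, and tool output captured in the trace, so no live tools are called. Everything here is hypothetical: `fake_model` stands in for an LLM so the example runs offline, and the two prompt builders represent the "before" and "after" versions being compared side by side.

```python
from typing import Callable

# Hypothetical context captured in a production trace: the user input, the
# retrieved document, and the tool output the agent saw at the time.
recorded = {
    "input": "Can I return a laptop after 45 days?",
    "retrieved": ["Laptops may be returned within 30 days of delivery."],
    "tool_output": {"days_since_delivery": 45},
}


def fake_model(prompt: str) -> str:
    """Offline stand-in for an LLM call: answers correctly only if the policy is in the prompt."""
    return "No" if "within 30 days" in prompt else "Yes"


def replay(ctx: dict, build_prompt: Callable[[dict], str],
           model: Callable[[str], str] = fake_model) -> str:
    """Re-run the failing step against the recorded context; no live tools are called."""
    return model(build_prompt(ctx))


def old_prompt(ctx: dict) -> str:
    # Original version: ignores the retrieved policy entirely.
    return ctx["input"]


def new_prompt(ctx: dict) -> str:
    # Fixed version: grounds the answer in the retrieved policy and tool output.
    return (
        f"Policy: {ctx['retrieved'][0]}\n"
        f"Days since delivery: {ctx['tool_output']['days_since_delivery']}\n"
        f"Question: {ctx['input']}"
    )


for label, build in [("before", old_prompt), ("after", new_prompt)]:
    print(label, replay(recorded, build))
# before Yes
# after No
```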

After the fix is verified, add the failing input, together with the corrected expected output, to your dataset as a permanent eval case with one click. That eval case then runs on every future code change, so the same failure is caught before it can ship again. Without trace-to-eval conversion, teams fix individual failures but have no systematic way to stop the same class of failure from recurring in the next deployment.
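A minimal sketch of trace-to-eval conversion, assuming a JSON Lines dataset on disk and a trace represented as a dict with `id`, `input`, and `failure` fields. The helper names are hypothetical; in Braintrust this step is a one-click action rather than code you write.

```python
import json
import tempfile
from pathlib import Path


def trace_to_eval_case(trace: dict, expected: str) -> dict:
    """Turn a failing trace into an eval case: the original input, the corrected
    expected output, and metadata linking back to the source trace."""
    return {
        "input": trace["input"],
        "expected": expected,
        "metadata": {"source_trace_id": trace["id"], "failure": trace.get("failure")},
    }


def append_case(dataset: Path, case: dict) -> bool:
    """Append a case to a JSON Lines dataset unless its source trace is already there.
    Returns True if the case was written."""
    seen = set()
    if dataset.exists():
        for line in dataset.read_text().splitlines():
            if line.strip():
                seen.add(json.loads(line)["metadata"]["source_trace_id"])
    if case["metadata"]["source_trace_id"] in seen:
        return False
    with dataset.open("a") as f:
        f.write(json.dumps(case) + "\n")
    return True


with tempfile.TemporaryDirectory() as tmp:
    dataset = Path(tmp) / "regressions.jsonl"
    case = trace_to_eval_case(
        {"id": "t3", "input": "Can I return a laptop after 45 days?", "failure": "ignored return policy"},
        expected="No. Laptops can be returned within 30 days of delivery.",
    )
    written = [append_case(dataset, case), append_case(dataset, case)]
    lines = dataset.read_text().splitlines()

print(written, len(lines))  # [True, False] 1
```

De-duplicating on the source trace ID keeps repeated triage of the same incident from inflating the dataset.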

Gating deployments with CI/CD quality checks

Eval cases only prevent regressions if they actually run before code ships. Braintrust's native GitHub Action runs the full eval suite on every pull request and posts the results directly as PR comments. If quality scores drop below the configured thresholds, the check fails and the merge is blocked. This automated gating reduces the need for manual QA review of prompt and model changes, and every deployment is validated against the growing library of real production failures.
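The gating logic itself is simple. The sketch below assumes each eval result carries a dict of named scores and that thresholds apply to the mean of each scorer; both the result format and the `THRESHOLDS` values are illustrative assumptions, not the GitHub Action's configuration.

```python
import statistics

# Hypothetical minimum mean score per scorer; a real setup would load these from config.
THRESHOLDS = {"factuality": 0.80, "tool_selection": 0.90}


def gate(results: list, thresholds: dict) -> list:
    """Return failure messages; an empty list means the quality gate passes."""
    failures = []
    for scorer, minimum in thresholds.items():
        scores = [r["scores"][scorer] for r in results if scorer in r["scores"]]
        # A scorer with no results at all is treated as failing.
        mean = statistics.mean(scores) if scores else 0.0
        if mean < minimum:
            failures.append(f"{scorer}: mean {mean:.2f} < threshold {minimum:.2f}")
    return failures


results = [
    {"case": "t1", "scores": {"factuality": 0.9, "tool_selection": 1.0}},
    {"case": "t3", "scores": {"factuality": 0.6, "tool_selection": 1.0}},
]
problems = gate(results, THRESHOLDS)
print(problems)  # ['factuality: mean 0.75 < threshold 0.80']
```

A CI script would finish with `sys.exit(1 if problems else 0)`; the non-zero exit status fails the check, and marking that check as required in branch protection is what turns it into a merge block.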

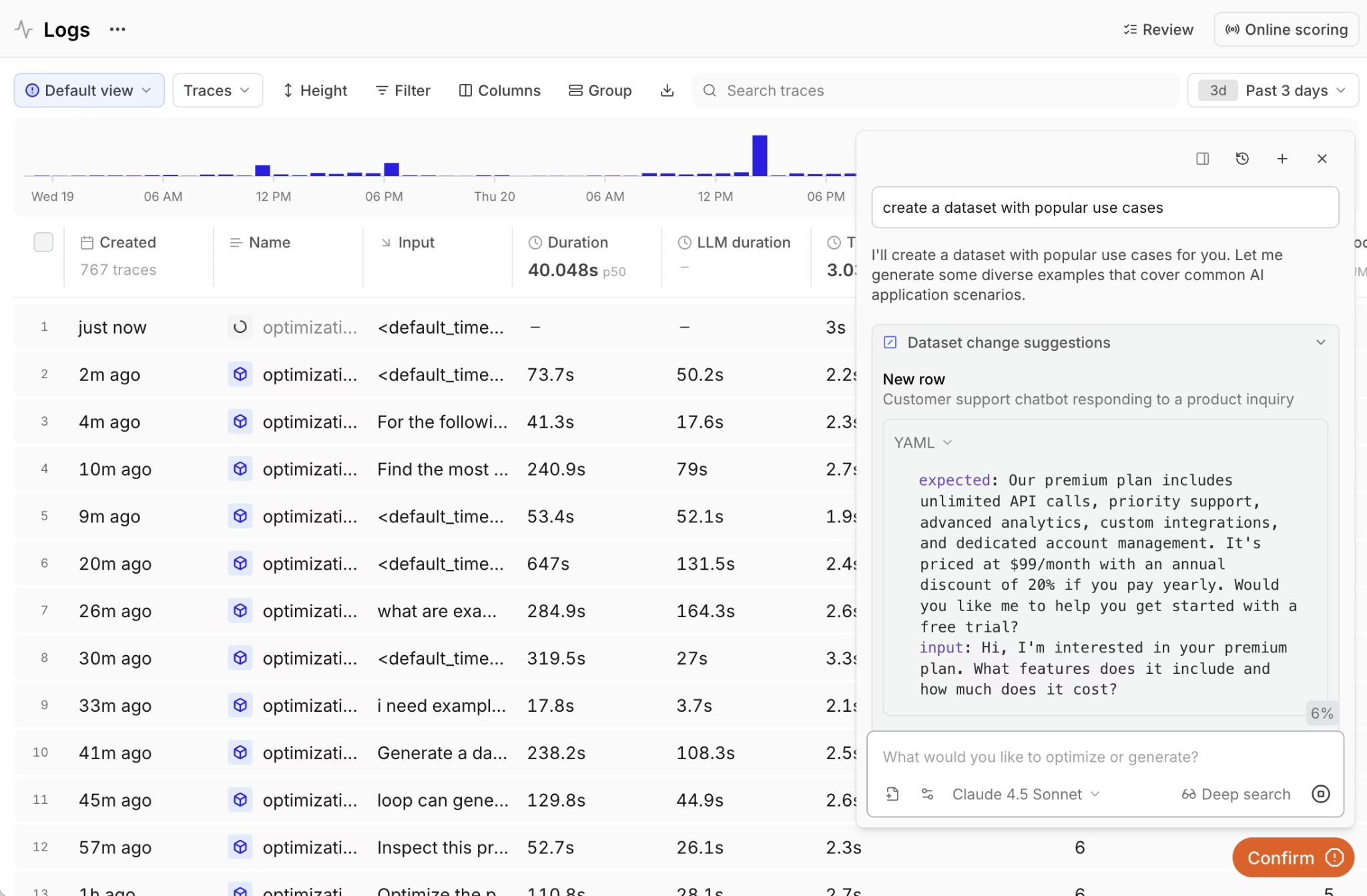

Shared debugging context for PMs and engineers

Agent failures often involve product-level decisions, where the correct agent behavior depends on business logic defined by PMs. Loop, Braintrust's AI assistant, lets anyone on the team analyze production logs with natural language, generate test datasets from real traffic, and create custom scorers from plain English descriptions. PMs and engineers can view the same traces, eval results, and quality metrics in a single workspace, eliminating the handoff overhead that slows down debugging.

Braintrust supports 40+ framework integrations, including LangChain, LlamaIndex, CrewAI, OpenAI Agents SDK, and Vercel AI SDK, along with OpenTelemetry for existing instrumentation and an AI Proxy that logs requests with minimal code changes (the client is pointed at the proxy's URL), enabling immediate production visibility without re-architecting the stack.

Braintrust's evaluation-focused debugging workflow lets teams ship agent updates faster, because every change is validated against real production failures before it reaches users.

Pros

- One-click conversion of production failures into permanent eval cases that run on every future change

- CI/CD quality gates through native GitHub Actions that block regressions before merging

- Loop AI assistant lets non-technical team members analyze traces, generate datasets, and build scorers from natural language

- Playground loads any production trace for prompt iteration with side-by-side comparison against your eval suite

- 25+ built-in scorers for accuracy, relevance, and safety, plus custom scorer support

- Unified workspace where PMs and engineers share the same debugging views, eval results, and quality metrics

Cons

- Advanced features like self-hosting require an enterprise plan

- Initial evaluation setup requires defining scorers and building a baseline dataset

Pricing

Free tier with 1M trace spans, 10K scores, and unlimited users. Pro plan at $249/month. Custom enterprise pricing available.

2. LangSmith

Best for: Teams building agents on LangChain and LangGraph who want framework-aware tracing.

Not for: Teams using non-LangChain frameworks who need framework-agnostic debugging.

LangSmith provides trace visualization for agent workflows and integrates most directly with LangChain and LangGraph, while also supporting other frameworks through SDK instrumentation. It records model calls, tool usage, and intermediate steps so teams can inspect how a request was executed. LangSmith supports annotation workflows and CI integration for debugging during development, though its per-trace pricing model can increase costs for high-volume agents.

Pros

- Zero-config tracing for LangChain and LangGraph workflows

- Polly AI assistant for trace analysis and prompt suggestions

- Pairwise comparison for evaluating agent outputs

- CI/CD integration with pipeline gating on eval scores

Cons

- The deepest debugging support is tied to the LangChain ecosystem

- Per-trace pricing at high volumes can get expensive

Pricing

Free tier with 5K traces/month. Plus plan at $39/seat/month with 10K traces included. Custom enterprise pricing.

3. Langfuse

Best for: Teams that need open-source debugging with full self-hosting and data control.

Not for: Teams that want turnkey CI/CD quality gates and automated dataset generation without custom engineering.

Langfuse captures nested traces for agent workflows, allowing teams to inspect model calls, tool usage, and execution paths when investigating failures. Developers can instrument Python functions across different frameworks, and teams can self-host the platform to meet data residency requirements. However, turning traces into CI-enforced regression tests or automated quality gates requires additional engineering effort, because these workflows are not built in.
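Function-level instrumentation usually relies on a decorator that opens a span when a function is called and closes it when the function returns. The sketch below is a hypothetical, standard-library-only `traced` decorator, not Langfuse's SDK; it uses `contextvars` to track the parent span so nested calls form a tree.

```python
import contextvars
import functools
import time

# The span currently executing; decorated calls made inside it become its children.
_current = contextvars.ContextVar("current_span", default=None)
finished: list = []  # completed spans, in the order they finished


def traced(fn):
    """Record name, parent, inputs, output, and duration for each call to `fn`."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        parent = _current.get()
        span = {
            "name": fn.__name__,
            "parent": parent["name"] if parent else None,
            "inputs": {"args": args, "kwargs": kwargs},
        }
        token = _current.set(span)
        start = time.perf_counter()
        try:
            span["output"] = fn(*args, **kwargs)
            return span["output"]
        finally:
            # Runs on success and on exceptions, so failing spans are still recorded.
            span["duration_s"] = time.perf_counter() - start
            _current.reset(token)
            finished.append(span)
    return wrapper


@traced
def search(query):
    return ["doc-7"]


@traced
def agent(question):
    docs = search(question)
    return f"Answer based on {docs[0]}"


agent("How do refunds work?")
print([(s["name"], s["parent"]) for s in finished])
# [('search', 'agent'), ('agent', None)]
```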

Pros

- Open-source with unrestricted self-hosting

- 50+ framework integrations with framework-agnostic tracing

- Prompt versioning with deployment labels and rollback support

- Annotation workflows with inline comments and team review

Cons

- CI/CD quality gates and automated eval pipelines require custom integration

- Self-hosting requires managing PostgreSQL, ClickHouse, Redis, and S3-compatible blob storage infrastructure

Pricing

Free cloud plan with 50K units/month. Paid plan starts at $29/month. Self-hosting is free with all features.

4. Arize Phoenix

Best for: Teams that want OpenTelemetry-native, vendor-agnostic debugging with embedding analysis.

Not for: Teams that need a fully managed debugging platform with integrated eval workflows.

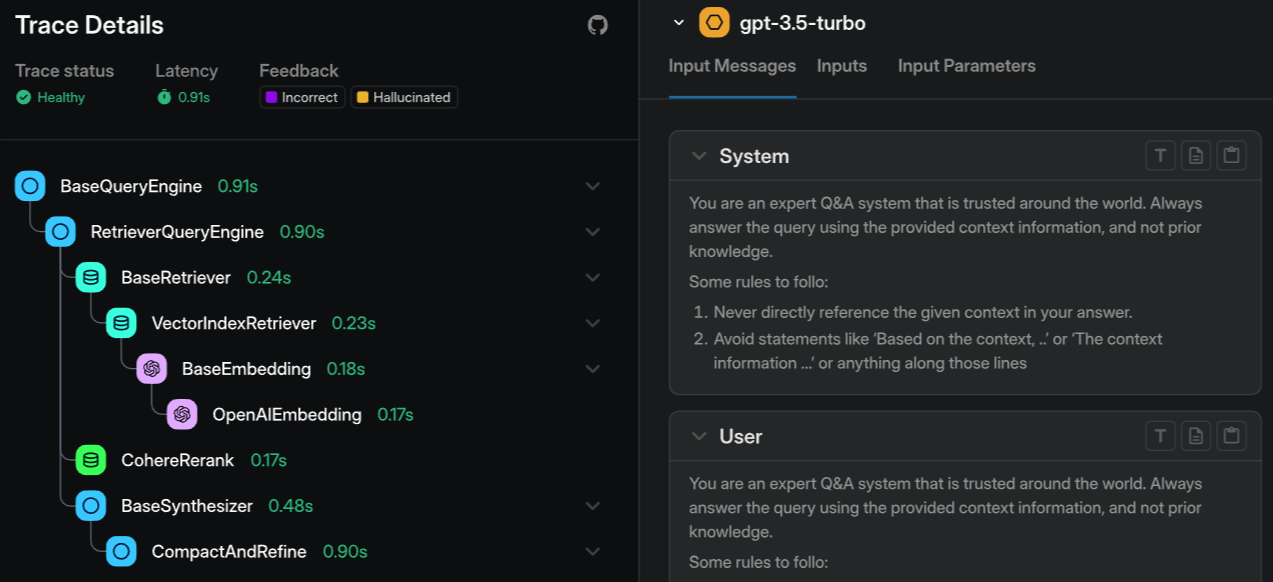

Arize Phoenix uses OpenTelemetry and the OpenInference standard for trace capture, enabling portable data across vendor-agnostic architectures. It supports replaying traces to inspect failures and includes evaluation templates to analyze model behavior during debugging. Phoenix also offers embedding clustering and drift detection to identify patterns across similar failure cases. The open-source version is fully self-hostable without any feature restrictions, while the managed cloud offering, Arize AX, provides enterprise support and additional compliance capabilities.
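Embedding clustering groups failures that are semantically similar even when they share few keywords. The sketch below uses toy three-dimensional vectors and a greedy cosine-similarity pass; the vectors, the 0.9 threshold, and the `cluster` helper are illustrative assumptions, and production tools typically use dimensionality reduction plus density-based clustering instead.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def cluster(items, threshold=0.9):
    """Greedy single-pass clustering: add each item to the first cluster whose seed
    vector is similar enough, otherwise start a new cluster with it as the seed."""
    clusters = []  # list of (seed_vector, member_labels)
    for label, vec in items:
        for seed, members in clusters:
            if cosine(seed, vec) >= threshold:
                members.append(label)
                break
        else:
            clusters.append((vec, [label]))
    return [members for _seed, members in clusters]


# Toy embeddings of failing user requests (real embeddings have hundreds of dimensions).
failures = [
    ("refund for damaged item", [0.90, 0.10, 0.00]),
    ("refund after 45 days", [0.85, 0.15, 0.05]),
    ("change shipping address", [0.05, 0.90, 0.20]),
    ("update delivery address", [0.10, 0.88, 0.25]),
]
print(cluster(failures))
# [['refund for damaged item', 'refund after 45 days'], ['change shipping address', 'update delivery address']]
```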

Pros

- OpenTelemetry-native with vendor-agnostic, portable trace data

- Embedding clustering for identifying failure patterns that text filtering misses

- Free and self-hostable with no feature restrictions

- Broad framework support through OpenInference instrumentation

Cons

- Managed cloud features require Arize AX with separate enterprise pricing

- Evaluation workflows are less integrated than eval-first platforms

Pricing

Open-source and free for self-hosting. Arize AX has a free tier with 25K spans/month, and the paid plan starts at $50/month.

5. Helicone


Best for: Teams that need fast, proxy-based debugging for cost spikes and latency issues across multiple LLM providers.

Not for: Teams that need deep agent trace reconstruction with step-level debugging and eval integration.

Helicone acts as a proxy between your application and LLM providers, logging each request and response, along with costs, latencies, and token usage. Teams enable Helicone by changing the base URL, and the platform supports many model providers through a unified API. Helicone groups related requests into sessions, which helps with multi-turn conversation debugging and traffic analysis, but it captures request-level data rather than internal agent steps. Teams that need tool-level tracing or integrated regression workflows must combine Helicone with a separate debugging system.
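The sketch below shows what a logging proxy can and cannot see: it wraps each provider call and records latency, token usage, estimated cost, and a session ID, but nothing about the agent's reasoning between calls. `send`, `fake_send`, the model name, and the prices in `PRICE_PER_1K` are hypothetical.

```python
import time
from dataclasses import dataclass

# Hypothetical prices per 1K tokens; real prices vary by provider and model.
PRICE_PER_1K = {"model-a": {"input": 0.0005, "output": 0.0015}}


@dataclass
class LogEntry:
    session_id: str
    model: str
    latency_s: float
    input_tokens: int
    output_tokens: int
    cost_usd: float


log: list = []


def logged_call(send, model: str, prompt: str, session_id: str) -> dict:
    """Forward one request and record what a logging proxy can observe:
    latency, token usage, estimated cost, and the session it belongs to."""
    start = time.perf_counter()
    response = send(model, prompt)  # `send` is a hypothetical provider client function
    latency = time.perf_counter() - start
    usage = response["usage"]
    price = PRICE_PER_1K[model]
    cost = (usage["input_tokens"] * price["input"] + usage["output_tokens"] * price["output"]) / 1000
    log.append(LogEntry(session_id, model, latency, usage["input_tokens"], usage["output_tokens"], cost))
    return response


def fake_send(model: str, prompt: str) -> dict:
    """Offline stand-in for a provider API response."""
    return {"text": "ok", "usage": {"input_tokens": 1200, "output_tokens": 300}}


logged_call(fake_send, "model-a", "Summarize this support ticket.", session_id="s-1")
print(f"{log[0].cost_usd:.6f}")  # 0.001050
```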

Pros

- One-line proxy integration that only requires changing the base URL, with no SDK instrumentation

- Automatic cost-based routing and caching across 100+ providers

- Session tracing for multi-turn conversation debugging

- Open-source with self-hosting via Docker and Kubernetes

Cons

- Proxy architecture captures request-level data, not internal agent reasoning steps

- Limited evaluation and CI/CD integration for regression prevention

Pricing

Free plan with 10,000 requests/month. Pro plan starts at $79/month, with custom enterprise pricing available.

6. Vellum

Best for: Teams building agents with visual workflow builders that need to debug execution paths graphically.

Not for: Teams running code-first agents who need SDK-level tracing and CI/CD-integrated eval workflows.

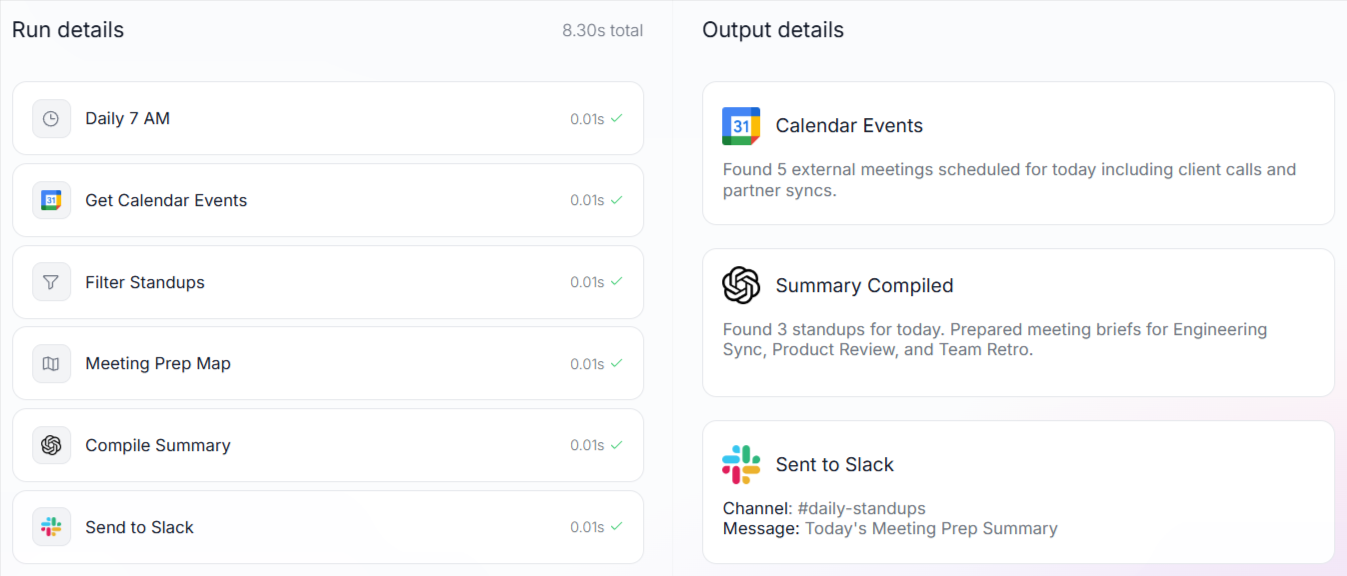

Vellum provides debugging through its graph-based workflow builder, where teams can step through each node to inspect inputs, outputs, and timing. The Workflow Sandbox supports replaying runs and mocking external integrations without calling live APIs. Vellum also includes production evaluations and A/B testing, but its debugging model centers on the visual builder, which may not suit teams that build and deploy agents primarily in code.

Pros

- The visual graph execution view shows exactly where a workflow node failed

- Workflow Sandbox replays from any step with mocked integrations

- Built-in prompt versioning and A/B testing

- Environment-based deployment with development, staging, and production isolation

Cons

- The debugging workflow is tightly coupled to Vellum's visual builder

- External monitoring and CI/CD integration options are limited compared to code-first platforms

Pricing

Free tier with 30 credits/month. Paid plan starts at $25 per month for 100 credits. Custom enterprise pricing.

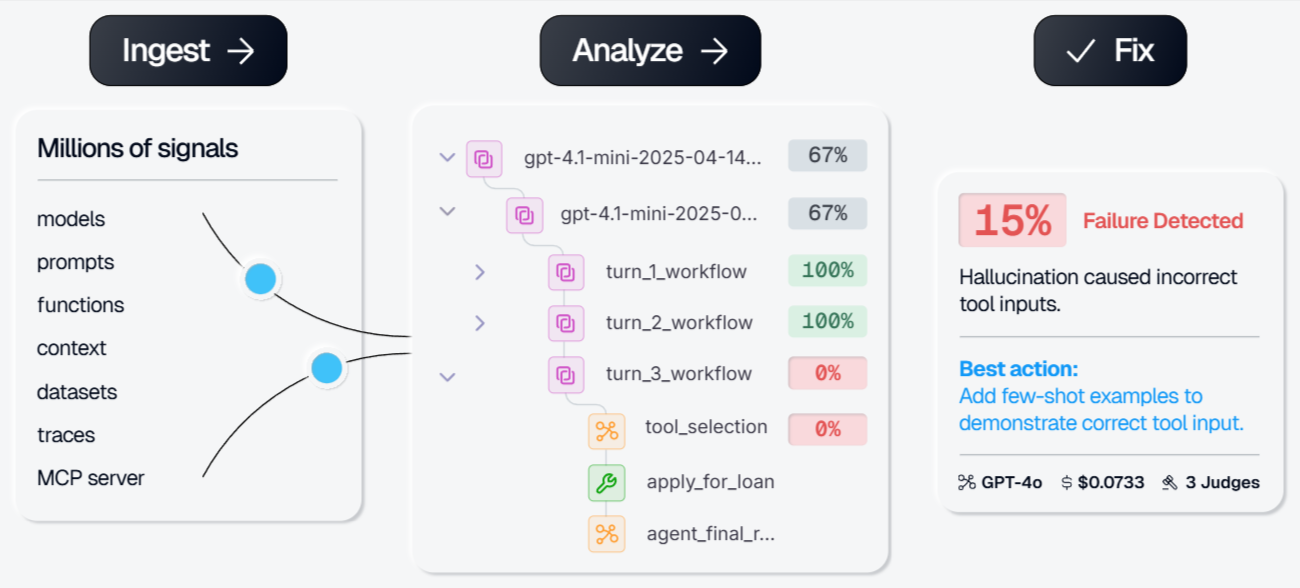

7. Galileo

Best for: Teams running high-volume agents that need automated failure detection with real-time evaluators.

Not for: Teams that want full trace replay and sandbox debugging for individual failures.

Galileo emphasizes automated failure analysis over manual trace inspection. Its Insights Engine scans production traces to detect recurring failure patterns, cluster similar issues, and suggest corrective actions. Galileo also offers Luna-2 evaluators designed for low-latency, lower-cost scoring at scale, enabling real-time evaluation across high-volume requests. While Galileo provides agent-specific metrics such as tool selection accuracy and task completion rates, it focuses more on aggregate failure detection than on step-by-step trace replay or sandbox-based debugging of individual requests.
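Agent-specific metrics such as tool selection accuracy and task completion rate reduce to simple ratios once each run is labeled. The definitions below are a minimal illustration over hypothetical labeled runs, not Galileo's implementation, which scores runs with its own evaluators.

```python
# Hypothetical labeled runs: the tool the agent chose, the tool that was correct
# for the request, and whether the overall task was completed.
runs = [
    {"chosen_tool": "search_orders", "expected_tool": "search_orders", "completed": True},
    {"chosen_tool": "issue_refund", "expected_tool": "search_orders", "completed": False},
    {"chosen_tool": "search_orders", "expected_tool": "search_orders", "completed": True},
    {"chosen_tool": "update_address", "expected_tool": "update_address", "completed": False},
]


def tool_selection_accuracy(runs: list) -> float:
    """Fraction of runs where the agent picked the expected tool."""
    return sum(r["chosen_tool"] == r["expected_tool"] for r in runs) / len(runs)


def task_completion_rate(runs: list) -> float:
    """Fraction of runs where the overall task was completed."""
    return sum(r["completed"] for r in runs) / len(runs)


print(tool_selection_accuracy(runs), task_completion_rate(runs))  # 0.75 0.5
```

The last run picked the right tool but still failed the task, which is why the two metrics are tracked separately.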

Pros

- Insights Engine surfaces failure patterns and suggests fixes automatically

- Agent-specific metrics for tool selection, task completion, and session success

- Eval-to-guardrail pipeline converts pre-production checks into production safety filters

- Cost-effective Luna-2 evaluators

Cons

- Less suited for individual trace replay and sandbox debugging workflows

- Evaluation logic depends on Galileo's proprietary Luna-2 models

Pricing

Free tier with 5,000 traces/month. Pro plan starts at $100/month for 50,000 traces. Custom enterprise pricing.

Comparison table: best AI agent debugging tools (2026)

| Tool | Multi-step trace reconstruction | Replay / Sandbox | Root-cause workflow | Eval integration | CI/CD quality gates | Alerting | Cross-functional collaboration | Starting price (SaaS) |
|---|---|---|---|---|---|---|---|---|
| Braintrust | Full multi-step tracing with nested spans across model calls, tools, and retrieval | Playground replays full production traces with preserved execution context | Filtering, span inspection, side-by-side comparison, Loop AI-assisted analysis | One-click trace-to-eval conversion, offline + online scoring | Native GitHub Action with merge blocking on score thresholds | Webhooks (Slack, PagerDuty), score-based monitoring | Shared trace + eval workspace for PMs and engineers | Free (1M spans, 10K scores) |
| LangSmith | Deep tracing within LangChain / LangGraph workflows | Trace replay + Playground | Polly AI analysis, trace comparison | Dataset-based evals, LLM-as-judge | CI integration via pytest/GitHub workflows | Webhook alerts | Annotation queues, review workflows | Free (5K traces) |
| Langfuse | Nested tracing across frameworks | Limited replay (prompt-focused) | Filtering, dashboards, annotations | LLM-as-judge, custom evaluators | Requires custom CI integration | Custom webhook setup | Shared dashboards + comments | Free (50K units) |
| Arize Phoenix | OpenTelemetry-based multi-step tracing | Trace replay in open-source UI | Embedding clustering, drift detection | Evaluation templates (less tightly integrated with CI) | Requires custom CI integration | Custom alerting via AX | Dashboard sharing | Free (25K spans) |
| Helicone | Request-level proxy logging (not internal agent step tracing) | Session replay for conversations | Cost and latency analytics | Limited eval support | Not native | Cost + latency alerts | Team dashboards | Free (10K requests) |
| Vellum | Visual workflow node tracing (builder-centric) | Workflow Sandbox with mocked integrations | Step-by-step node inspection | Built-in evaluations within the platform | Limited external CI integration | Workflow-level alerts | Shared visual workspace | Free (30 credits) |
| Galileo | Agent workflow tracing with automated clustering | Limited individual trace replay | Insights Engine auto-detection | Automated Luna-2 scoring | Limited native CI gating | Quality drift alerts | Dashboard sharing | Free (5K traces) |


Why Braintrust is the best choice for agent debugging

Agent debugging in production is only effective when investigating a failure directly strengthens the system that ships the next release. Braintrust integrates trace inspection, evaluation creation, and CI enforcement into a single continuous workflow, so that debugging immediately produces a lasting safeguard.

In Braintrust, a production trace that exposes a failure can be converted into a permanent evaluation case and enforced automatically through CI quality gates. Every subsequent pull request is validated against the accumulated evaluation suite, and merges are blocked when defined thresholds are not met. This trace-to-evaluation workflow ensures that each resolved issue becomes a regression test tied directly to deployment controls, allowing teams to scale agent complexity without repeatedly fixing the same classes of failures.

Production teams at Notion, Stripe, Cloudflare, Replit, and Zapier run their agent debugging workflows through Braintrust.

How we chose the best agent debugging tools

We evaluated all the listed tools against the criteria below, which reflect the full agent debugging workflow from failure detection to regression prevention.

Agent trace reconstruction: The tool must capture multi-step execution traces across model calls, tool invocations, and retrieval steps, with complete input and output context for each span so teams can see exactly what happened during a request.

Replay and sandboxing: The tool should allow teams to load a production trace into a controlled environment, adjust prompts or configurations, and rerun the scenario to verify a fix without affecting live systems.

Root-cause workflow: The tool should support filtering, clustering, and side-by-side comparisons so teams can isolate the failing step and understand how it differs from successful runs.

Evals integration: The tool must allow teams to convert production failures into permanent evaluation cases and run regression checks across offline datasets and live traffic.

CI/CD quality gates: The tool should integrate with systems such as GitHub to block merges when evaluation scores fall below defined thresholds.

Alerting: The tool should support alerts for latency increases, quality drops, or cost spikes.

Collaboration: Engineers and product teams should be able to review the same traces, evaluation results, and debugging context within a shared workspace.
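The alerting criterion can be made concrete with a baseline-versus-current comparison. The sketch below assumes each request record carries `latency_s`, `score`, and `cost_usd`, and the ratios in `RULES` are illustrative thresholds, not recommended defaults.

```python
from statistics import mean, median

# Hypothetical rules: (direction, ratio) relative to the baseline window.
RULES = {
    "median_latency_s": ("increase", 1.5),  # alert if median latency grows by more than 50%
    "mean_score": ("decrease", 0.9),        # alert if quality falls below 90% of baseline
    "total_cost_usd": ("increase", 2.0),    # alert if spend more than doubles
}


def window_stats(requests: list) -> dict:
    """Summarize one time window of request records."""
    return {
        "median_latency_s": median([r["latency_s"] for r in requests]),
        "mean_score": mean([r["score"] for r in requests]),
        "total_cost_usd": sum(r["cost_usd"] for r in requests),
    }


def check_alerts(baseline: list, current: list, rules: dict = RULES) -> list:
    """Compare the current window against the baseline and return triggered alerts."""
    base, cur = window_stats(baseline), window_stats(current)
    alerts = []
    for metric, (direction, ratio) in rules.items():
        limit = base[metric] * ratio
        breached = cur[metric] > limit if direction == "increase" else cur[metric] < limit
        if breached:
            alerts.append(f"{metric}: {cur[metric]:.3f} (baseline {base[metric]:.3f})")
    return alerts


baseline = [
    {"latency_s": 1.0, "score": 0.90, "cost_usd": 0.01},
    {"latency_s": 1.2, "score": 0.80, "cost_usd": 0.01},
    {"latency_s": 1.1, "score": 0.85, "cost_usd": 0.01},
]
current = [
    {"latency_s": 1.1, "score": 0.60, "cost_usd": 0.01},
    {"latency_s": 1.3, "score": 0.70, "cost_usd": 0.02},
    {"latency_s": 1.2, "score": 0.65, "cost_usd": 0.01},
]
print(check_alerts(baseline, current))  # ['mean_score: 0.650 (baseline 0.850)']
```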

AI agent debugging FAQs

What is AI agent debugging?

AI agent debugging is the process of identifying, reproducing, and fixing failures in multi-step agent workflows running in production. Unlike traditional software, agents can return a valid response while making incorrect decisions across model calls, tool invocations, or retrieval steps. Debugging requires reconstructing the full execution path, isolating the step where the behavior went wrong, verifying the fix in a controlled environment, and converting the failure into a permanent evaluation case so the test suite catches it if it reappears in a future release.

How is agent debugging different from LLM observability?

LLM observability captures trace data showing what happened during a request, including inputs, outputs, timing, and costs for each step. Agent debugging uses that trace data to determine why the request failed and how to prevent it from failing again. On top of the captured traces, debugging adds structured workflows for reproducing failures, validating fixes, turning failures into evaluation cases, and enforcing quality gates through CI/CD so regressions do not ship.

How do I choose the right agent debugging tool?

Choose a tool that supports the full debugging lifecycle rather than only trace inspection. A strong debugging platform lets you analyze step-level decisions, replay failures in a sandbox, convert real production failures into evaluation datasets, and automatically validate every code change through CI/CD. Braintrust provides a complete debugging workflow in a single platform, with native GitHub Actions, one-click trace-to-eval conversion, and shared views for engineers and product managers working from the same data.

Is Braintrust better than LangSmith for debugging agents?

Braintrust is the stronger option for teams that want debugging tightly connected to evaluation and CI/CD enforcement across multiple frameworks. It supports 40+ integrations, converts production traces into evaluation cases with a single click, and runs automated evaluations on every pull request via its native GitHub Action. LangSmith offers a streamlined debugging experience within the LangChain and LangGraph ecosystem, especially for teams building exclusively on those frameworks. Teams using diverse stacks or requiring eval-driven release gates typically benefit more from Braintrust's integrated workflow.

How do I get started with agent debugging?

The fastest way to get started is to instrument your agents with tracing so you can see every step of their execution, then build a small eval dataset from real production failures. With Braintrust, you can begin capturing traces in minutes using the SDK or AI Proxy, then enable CI evaluation through the native GitHub Action so every pull request runs against your regression suite. Braintrust's free tier includes 1 million trace spans and 10,000 evaluation scores per month, which is enough to instrument a production agent, investigate failures, and establish CI quality gates from the first release.