6 best LLM gateways for developers in 2026

LLM gateways sit between your application and multiple model providers, giving developers a single API to call across models without rewriting integration code for each provider. The best LLM gateways handle routing, failover, caching, and cost tracking so engineering teams can spend less time maintaining provider SDKs and more time building product features. Braintrust Gateway combines a unified API with built-in observability, evaluation, and encrypted caching, making it easier to manage multi-model development and production workflows in a single system. Start free with Braintrust Gateway.

What is an LLM gateway and why do you need one

An LLM gateway is an infrastructure layer that routes API requests from your application to one or more model providers through a single endpoint. Instead of integrating each provider's SDK separately, developers can point existing code to the gateway URL and switch between supported models by changing a single parameter.
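As a rough illustration of the single-endpoint idea, the sketch below builds an OpenAI-style chat completion request using only the Python standard library. The gateway URL, the API key, and the model names are placeholders rather than values from any specific gateway. The point is that only the `model` field changes when switching models.

```python
import json
import urllib.request

# Hypothetical gateway endpoint; replace with the URL your gateway documents.
GATEWAY_URL = "https://gateway.example.com/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # the only field that changes when switching models
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        GATEWAY_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Same code path, two different (placeholder) model names.
req_a = build_chat_request("provider-a-model", "Summarize this text.", "sk-placeholder")
req_b = build_chat_request("provider-b-model", "Summarize this text.", "sk-placeholder")
```

Passing either request to `urllib.request.urlopen` would send it. Both requests target the same URL and carry the same credentials, so the application code stays the same across models.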

An LLM gateway becomes useful once an application depends on multiple models in production. A team using GPT for summarization and Claude for code generation would otherwise need to manage two SDKs, two authentication setups, and two billing systems. An LLM gateway provides the application with a single integration point for working across providers.

6 best LLM gateways for developers in 2026

1. Braintrust Gateway

Braintrust Gateway provides a unified API for routing requests to models from OpenAI, Anthropic, Google, AWS Bedrock, Vertex AI, Azure, Mistral, and other providers. Developers can point existing SDKs to the gateway URL and call supported models with a single API key.

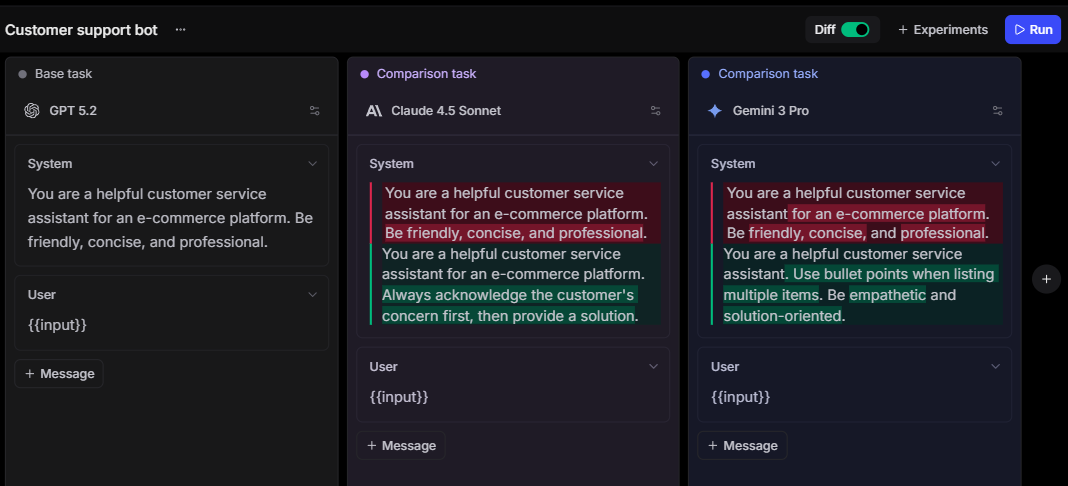

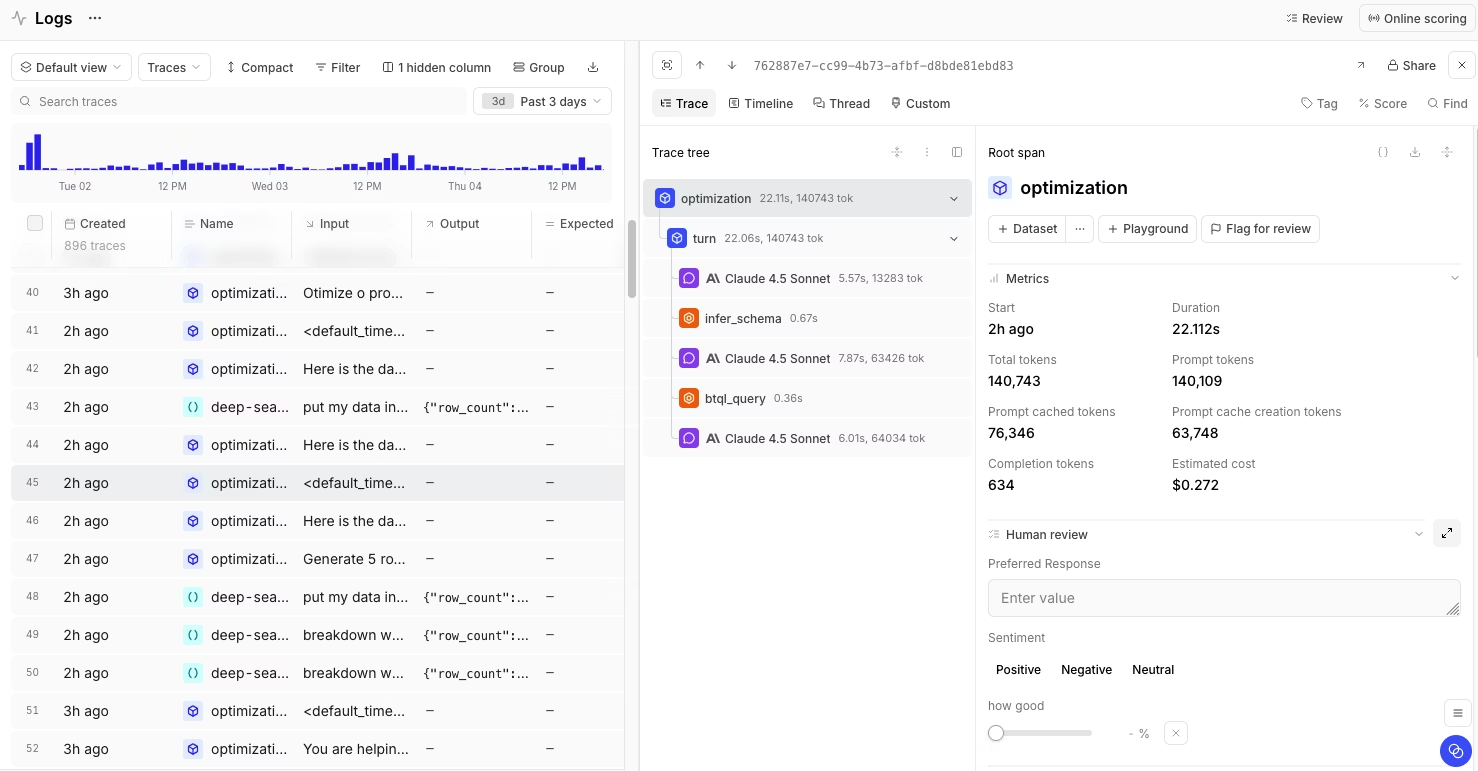

Braintrust Gateway stands out from standalone routers by connecting directly to Braintrust's observability and evaluation platform. Requests that flow through the gateway automatically feed into Braintrust's tracing and evaluation pipeline, so developers can run evaluations against production traffic, compare model performance across experiments, and catch regressions in CI/CD before they reach users. Handling routing and quality assurance in one platform removes the need to stitch together separate gateway, logging, and testing tools.

Teams can standardize on one SDK and still call models from other providers without importing additional libraries. A team using the OpenAI SDK can call Claude or Gemini models, and the same cross-provider flexibility applies across other supported SDKs. Every request still flows through Braintrust's tracing system, which keeps integration simpler across the codebase while preserving full visibility into each request.

Caching in Braintrust Gateway uses AES-GCM encryption tied to each user's API key, so cached responses stay private by default. Developers configure caching behavior per request with headers for cache mode, TTL, and cache control. For teams iterating on prompts during development or running repeated evaluations, caching cuts both latency and cost without requiring any external cache infrastructure.
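To make the caching behavior more concrete, here is a minimal in-memory sketch of a response cache that is scoped per API key and expires entries after a TTL. It is an illustration only: the class and parameter names are invented, it does not implement the AES-GCM encryption described above, and it should not be read as Braintrust's implementation.

```python
import hashlib
import json
import time


class ScopedTTLCache:
    """Toy response cache: entries are scoped to an API key and expire after a TTL."""

    def __init__(self, clock=time.monotonic):
        self._store = {}     # cache key -> (expiry time, response)
        self._clock = clock  # injectable clock so expiry is easy to test

    @staticmethod
    def _key(api_key: str, request: dict) -> str:
        # Hash the API key together with the canonical request body, so two
        # users sending the same request never share a cache entry.
        canonical = json.dumps(request, sort_keys=True)
        return hashlib.sha256(f"{api_key}\n{canonical}".encode("utf-8")).hexdigest()

    def get(self, api_key: str, request: dict):
        """Return the cached response, or None on a miss or an expired entry."""
        key = self._key(api_key, request)
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, response = entry
        if self._clock() >= expires_at:
            del self._store[key]  # drop the stale entry
            return None
        return response

    def put(self, api_key: str, request: dict, response: str, ttl_seconds: float) -> None:
        """Store a response that stays valid for ttl_seconds."""
        key = self._key(api_key, request)
        self._store[key] = (self._clock() + ttl_seconds, response)
```

Repeated evaluation runs that send identical requests would hit `get` and skip the provider call until the TTL passes, which is where the latency and cost savings come from.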

Best for: Developers and engineering teams building production AI applications who need a unified LLM gateway connected to evaluation, tracing, and quality monitoring in one platform.

Pros:

- Unified API compatible with OpenAI, Anthropic, and Google SDKs, with full cross-provider SDK support

- Gateway requests flow directly into Braintrust's tracing and evaluation workflows

- Supports evaluation on production traffic and regression checks in CI/CD

- Encrypted response caching with configurable TTL and per-request cache control headers

- Supports custom providers and self-hosted models through configurable endpoints

- Free to use during beta with production-grade uptime tracking

Cons:

- Braintrust Gateway is currently in beta

- Self-hosting requires an enterprise plan

Pricing: The LLM gateway is free during beta, with a generous free plan that includes 1M trace spans and 10K evaluation scores. See pricing details.

2. OpenRouter

OpenRouter is a managed API gateway that provides access to models from providers such as OpenAI, Anthropic, Google, and Meta via a single OpenAI-compatible endpoint. OpenRouter uses a prepaid credit system that covers all providers under a single account and charges per token, with no monthly subscription fees.

Best for: Individual developers and small teams who want fast access to a large catalog of models without managing provider accounts individually.

Pros:

- Access to 500+ models across 60+ providers through one API key

- Pay-per-token billing with no monthly subscription

- Routing variants for speed and cost

- Automatic provider fallbacks during outages

Cons:

- No self-hosting option for teams with data residency requirements

- No built-in observability, evaluation, or tracing tooling

- Limited governance controls for team-based access and budget management

Pricing: Pay-as-you-go with prepaid credits. Model pricing passes through provider rates. Free models available with rate limits.
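Automatic fallback, listed among the pros above, can be pictured as trying providers in priority order until one succeeds. The sketch below is a generic illustration with hypothetical names, not OpenRouter's actual routing logic. Each provider is modeled as a plain Python callable.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain raised an error."""


def call_with_fallback(
    providers: Sequence[tuple[str, Callable[[str], str]]],
    prompt: str,
) -> tuple[str, str]:
    """Try each (name, call) pair in order; return (provider name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would only fall back on retryable errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def flaky_primary(prompt: str) -> str:
    """Stand-in for a provider that is currently down."""
    raise TimeoutError("simulated outage")


def healthy_backup(prompt: str) -> str:
    """Stand-in for a provider that responds normally."""
    return f"echo: {prompt}"


used, answer = call_with_fallback(
    [("primary", flaky_primary), ("backup", healthy_backup)], "hello"
)
```

Here the primary raises, so the call is served by the backup and the caller never sees the outage.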

3. LiteLLM

LiteLLM is an open-source Python SDK and proxy server that translates requests across LLM providers into an OpenAI-compatible format. Teams can self-host the proxy and manage routing, spend tracking, and access control in their own environment. LiteLLM also supports integration with Braintrust for logging and observability workflows.

Best for: Engineering teams with DevOps capabilities who need a self-hosted gateway with full infrastructure control.

Pros:

- Open-source and self-hosted

- Supports 100+ models across all major providers

- Per-user and per-project budget tracking with virtual API keys

- Supports integrations with Braintrust, Langfuse, and other logging tools

Cons:

- Requires Redis and PostgreSQL for production deployments, adding operational overhead

- Observability and evaluation features depend entirely on third-party integrations

- SSO, guardrails, and audit logs require an enterprise plan

Pricing: Free and open-source for self-hosted use. Custom enterprise plans.

4. Helicone

Helicone is an open-source gateway and observability platform for LLM applications. It combines request routing with logging, cost tracking, and monitoring, which makes it a fit for teams that want gateway and observability functions in a single platform.

Best for: Teams that want an open-source LLM gateway with built-in request logging and usage analytics without setting up separate monitoring infrastructure.

Pros:

- Open-source with both cloud-hosted and self-hosted deployment options

- Automatic request logging and cost tracking on every request

- Includes rate limiting, caching, and failover features in the gateway layer

Cons:

- Evaluation and scoring capabilities are limited

- Multi-step session tracking requires custom headers

- Cloud pricing scales with request volume and storage

Pricing: Free tier with 10K requests/month. Paid plan starts at $79/month.

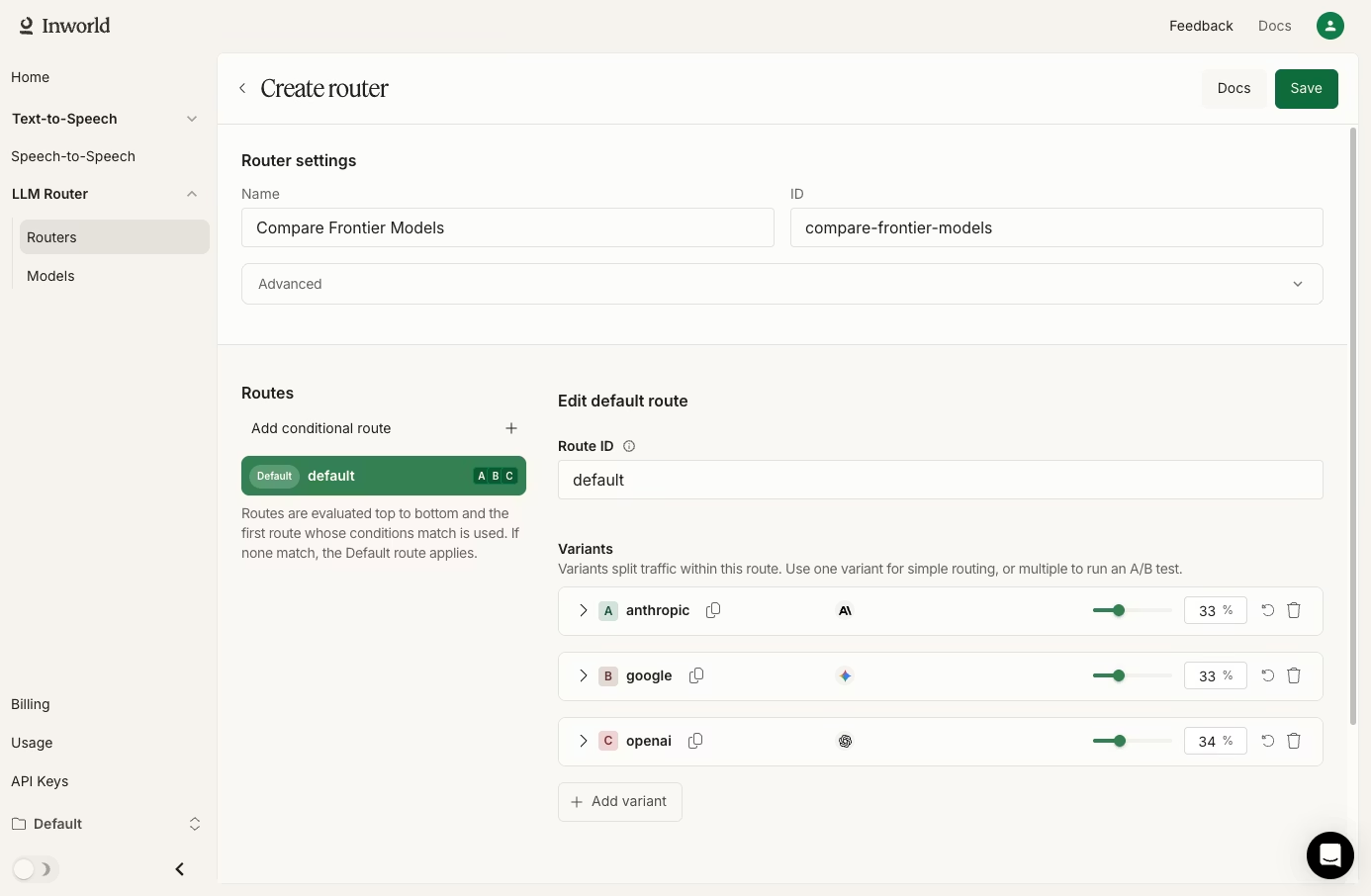

5. Inworld Router

Inworld Router is an LLM routing gateway that focuses on conditional routing, traffic splitting, and A/B testing across model variants. Inworld Router is in Research Preview and distinguishes itself by offering built-in support for combining LLM responses with text-to-speech output in a single API call.
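Percentage-based traffic splitting with sticky assignment is commonly done by hashing a user ID into a stable bucket. The sketch below shows that general technique under assumed names (`assign_variant`, the variant labels, and the weights are all hypothetical). It is not Inworld Router's configuration format or implementation.

```python
import hashlib


def assign_variant(user_id: str, weights: dict[str, int]) -> str:
    """Deterministically map a user to a variant according to percentage weights."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    # Hash the user ID into a stable bucket in [0, 100).
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    # Walk the cumulative weights until the bucket falls inside a variant's range.
    cumulative = 0
    for variant, weight in weights.items():
        cumulative += weight
        if bucket < cumulative:
            return variant
    raise AssertionError("unreachable when weights sum to 100")
```

Because the bucket depends only on the user ID, the same user keeps landing on the same variant for as long as the weights stay unchanged, which keeps A/B comparisons consistent across sessions.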

Best for: Teams building voice-enabled AI applications that need LLM routing combined with text-to-speech in one request pipeline.

Pros:

- Conditional routing with traffic splitting by percentage and sticky user assignment

- Combined LLM + TTS pipeline reduces the need for separate speech synthesis calls

- OpenAI and Anthropic SDK compatibility

- No markup on provider rates during Research Preview

Cons:

- Still in Research Preview with a limited production track record

- Smaller model catalog compared to more established gateways

- No built-in caching, cost tracking dashboards, or evaluation tooling

- Tightly coupled with Inworld AI's broader voice product suite

Pricing: Free during Research Preview with pass-through provider pricing.

6. Portkey

Portkey is a full-stack LLMOps platform that bundles an AI gateway with observability, guardrails, governance, and prompt management. Portkey's open-source gateway handles routing across 1,600+ models, and the enterprise tier adds features such as virtual key management, audit trails, and compliance controls.

Best for: Enterprise teams and platform engineers who need a gateway with built-in governance, guardrails, and compliance tooling for regulated industries.

Pros:

- Routes to 1,600+ models with automatic fallbacks, load balancing, and conditional routing

- Built-in guardrails for content moderation and output validation

- Open-source gateway with cloud-hosted and self-hosted deployment options

- SOC2 Type 2, ISO 27001, GDPR, and HIPAA compliance at the enterprise tier

Cons:

- Observability log caps on lower tiers restrict visibility at scale

- Advanced governance features like SSO, data residency, and custom BAAs require the Enterprise plan

- Log-based billing increases pricing complexity as request volume grows

Pricing: Free tier with 10K logs. Paid plans start at $49/month. Enterprise pricing is custom.

Best LLM gateways for developers compared (2026)

| Tool | Starting price | Best for | Notable features |
|---|---|---|---|
| Braintrust Gateway | Free during beta + free tier with 1M trace spans and 10K evaluation scores | Developers and engineering teams building production AI applications who need a unified LLM gateway connected to evaluation, tracing, and quality monitoring in one platform | Cross-SDK compatibility, encrypted caching, tracing and evaluation workflows, evaluation on production traffic, CI/CD regression checks, custom providers, and self-hosted model support |
| OpenRouter | Pay-as-you-go with prepaid credits | Individual developers and small teams who want fast access to a large catalog of models without managing provider accounts individually | 500+ models across 60+ providers, routing variants for speed and cost, automatic provider fallbacks |
| LiteLLM | Free and open-source for self-hosted use | Engineering teams with DevOps capabilities who need a self-hosted gateway with full infrastructure control | Open-source and self-hosted, 100+ models across major providers, virtual API keys, budget tracking, and Braintrust integration |
| Helicone | Free tier with 10K requests/month | Teams that want an open-source LLM gateway with built-in request logging and usage analytics without setting up separate monitoring infrastructure | Automatic request logging and cost tracking, cloud-hosted and self-hosted deployment options, rate limiting, caching, and failover |
| Inworld Router | Free during Research Preview | Teams building voice-enabled AI applications that need LLM routing combined with text-to-speech in one request pipeline | Conditional routing, traffic splitting, sticky user assignment, combined LLM + TTS pipeline, OpenAI and Anthropic SDK compatibility |
| Portkey | Free tier with 10K logs | Enterprise teams and platform engineers who need a gateway with built-in governance, guardrails, and compliance tooling for regulated industries | 1,600+ models, guardrails, automatic fallbacks, and load balancing, cloud-hosted and self-hosted deployment options, enterprise compliance controls |

Upgrade your LLM gateway capabilities with Braintrust. Start free today.

Why Braintrust Gateway is the best for developers

Most LLM gateways handle routing and leave tracing, evaluation, and release checks to separate tools. Braintrust Gateway connects routed requests to Braintrust's evaluation and observability workflows, so developers can investigate a production issue, turn the failing trace into a test case, run an evaluation, and verify the fix without leaving the Braintrust platform.

Production AI teams at Notion, Stripe, Vercel, Zapier, Ramp, and Instacart rely on Braintrust to maintain model quality at scale. Braintrust's free tier includes 1M trace spans and 10K evaluation scores per month, and the gateway is free during beta, giving teams enough room to test routing, tracing, and evaluation workflows in real use before moving to a paid plan. Start using Braintrust Gateway for free to route, trace, and evaluate LLMs in one platform.

How we chose the best LLM gateways

Selecting the right LLM gateway depends on how well it fits into the development workflow without adding unnecessary complexity. We evaluated each gateway against six criteria.

- API compatibility: The gateway should support OpenAI-compatible request formats at a minimum, with support for Anthropic and Google SDKs as an added advantage.

- Provider coverage: Access to major model providers gives teams the flexibility to switch models without changing gateways.

- Caching and cost management: Built-in response caching and per-request cost tracking help reduce costs, especially during development and evaluation work.

- Observability integration: Gateways that log requests and connect that data to tracing and evaluation tools reduce the need for custom logging pipelines.

- Deployment flexibility: Some teams need a managed cloud gateway, while others need self-hosted or hybrid deployment options to meet compliance, control, or data residency requirements.

- Pricing transparency: Clear pricing without hidden markups or complex usage models makes costs easier to forecast as request volume grows.
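The cost-management and pricing-transparency criteria above come down to simple arithmetic: tokens multiplied by a per-token rate. The sketch below shows that calculation for a single request. The `Price` type and the dollar figures are invented for illustration and do not reflect any provider's real rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD per one million tokens. The values used below are made up."""
    input_per_million: float
    output_per_million: float


def request_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request in USD."""
    return (
        input_tokens * price.input_per_million
        + output_tokens * price.output_per_million
    ) / 1_000_000


# Hypothetical rate card: $2 per million input tokens, $8 per million output tokens.
example_price = Price(input_per_million=2.0, output_per_million=8.0)
cost = request_cost(example_price, input_tokens=1_500, output_tokens=500)
```

With these made-up rates, the request costs (1,500 × 2 + 500 × 8) / 1,000,000 = $0.007. A gateway that adds a markup or bills per log would add terms to this formula, which is why transparent pricing makes forecasting easier.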

FAQs: best LLM gateways for developers

What is an LLM gateway?

An LLM gateway provides developers with a single control layer to work across multiple model providers. Instead of managing separate authentication, request schemas, failover logic, and billing patterns for each provider, teams route requests through one endpoint. Braintrust Gateway also connects routed traffic to tracing and evaluation inside the same platform.

How do I choose the right LLM gateway?

Choose based on the production workflow you need to support. Some gateways focus on provider access and routing, while others also support caching, tracing, evaluation, deployment control, and pricing visibility. Braintrust Gateway is the strongest fit when routing needs to work alongside evaluation and observability in a single platform.

Which is the best LLM gateway?

Braintrust Gateway is the best LLM gateway for developer teams that need routing, tracing, evaluation, encrypted caching, and production-quality workflows unified on a single platform.

Is Braintrust Gateway better than OpenRouter?

Braintrust Gateway and OpenRouter both provide OpenAI-compatible APIs with multi-provider access, but they serve different needs. OpenRouter focuses on broad model access and pay-as-you-go billing, while Braintrust Gateway connects routing directly to evaluation, tracing, and caching within a single platform. Teams building production applications benefit from Braintrust's integrated workflow because it reduces the number of tools needed to maintain model quality.

How do I get started with an LLM gateway?

Start free with Braintrust Gateway by pointing your existing OpenAI, Anthropic, or Google SDK to the Braintrust gateway URL, adding a Braintrust API key, and configuring provider credentials in the Braintrust dashboard. Braintrust Gateway then lets you route requests across providers through the same integration in minutes.