Braintrust is the strongest PromptLayer alternative for teams that need evaluation to shape what reaches production, with trace-level scorers, CI/CD quality gates, and AI-powered optimization through Loop.

Other top alternatives:

- LangSmith - Best fit for teams already building on LangChain or LangGraph and want tracing and prompt management inside that stack.

- Maxim AI - Stands out for teams that rely on human review and want structured evaluation workflows.

- Galileo - Well-suited for teams that prioritize packaged guardrails and runtime protection.

- W&B Weave - Fits teams already using Weights & Biases and want to extend ML experiment tracking into LLM tracing and evaluation.

- Fiddler AI - Serves compliance-heavy enterprises in regulated industries that need unified ML and LLM monitoring with audit-ready governance.

PromptLayer gives teams a solid prompt registry, version control, and a visual editor that lets non-technical collaborators iterate on prompts without writing code. For teams primarily focused on prompt versioning and prompt editing, PromptLayer is a strong fit.

But many teams that started with prompt versioning later need evaluation infrastructure in addition to prompt management. They need trace-level scoring, CI/CD quality gates that catch regressions before deploy, and a way to turn production signals into reusable eval datasets. Prompt management is one part of the LLM development workflow, but teams responsible for rigorous evaluation need evaluation-ready infrastructure. This guide covers six PromptLayer alternatives for LLM evaluation teams, with Braintrust as the strongest fit for teams that want to catch quality issues before users see them.

Why teams look for PromptLayer alternatives

PromptLayer versions every LLM call, provides a visual editor for non-technical stakeholders, supports A/B testing between prompt versions, and runs scheduled regression tests. Teams that need a prompt CMS with deployment controls get a well-designed product built for prompt management workflows.

Production teams start looking for alternatives when LLM evaluation becomes the main requirement. PromptLayer tracks prompt performance through cost, latency, and usage metrics, but does not support trace-level scoring with LLM-as-a-judge, code-based, or human evaluators across every span in a multi-step chain. CI/CD quality gates are missing, so prompt changes cannot be automatically blocked when scores fall below a threshold, and evaluation work often lives in ad hoc notebooks and scripts, disconnected from shared infrastructure.

Teams looking for PromptLayer alternatives are usually not trying to replace prompt versioning but to add evaluation infrastructure that can enforce quality before release.

6 best PromptLayer alternatives in 2026

1. Braintrust

Best for evaluation-first teams that need the full path from production behavior to release decisions.

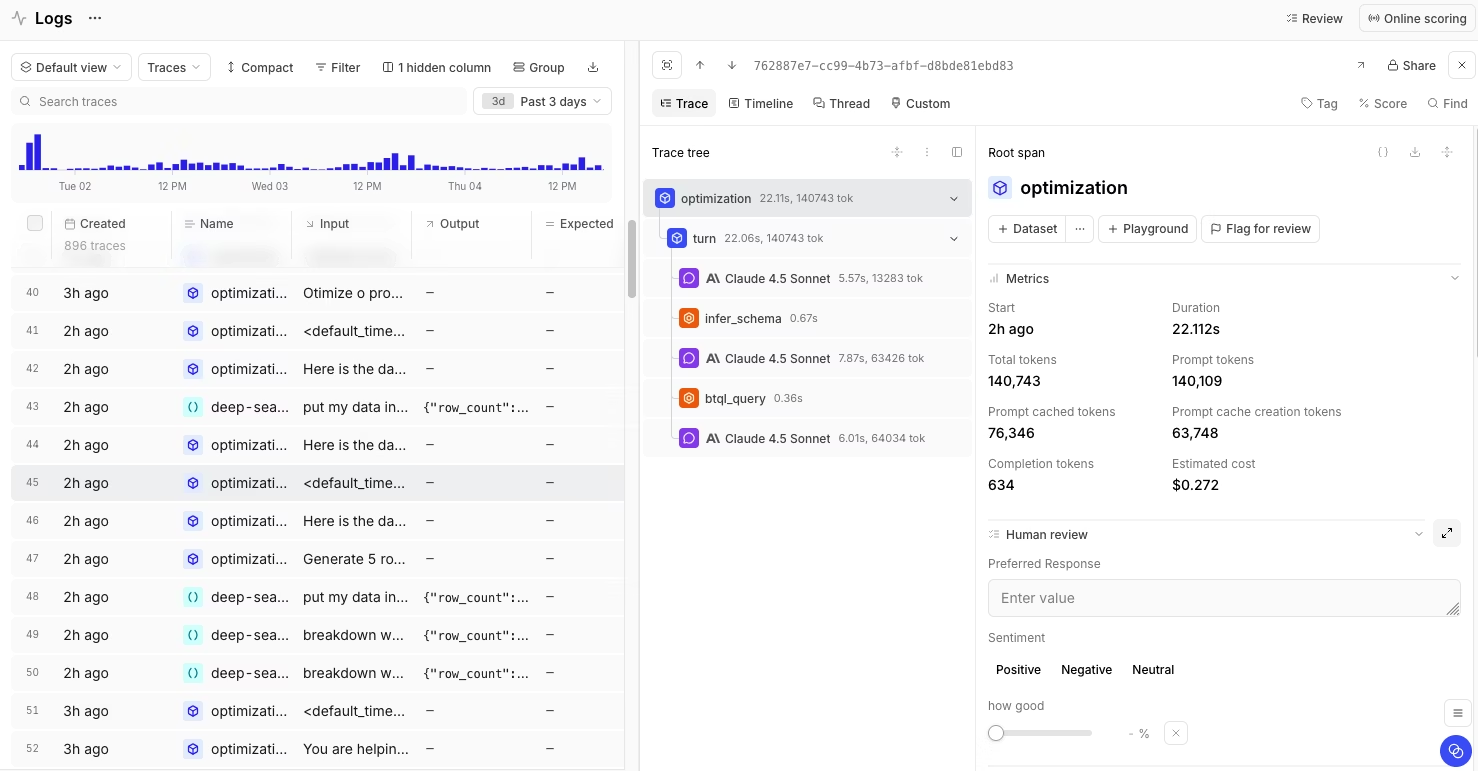

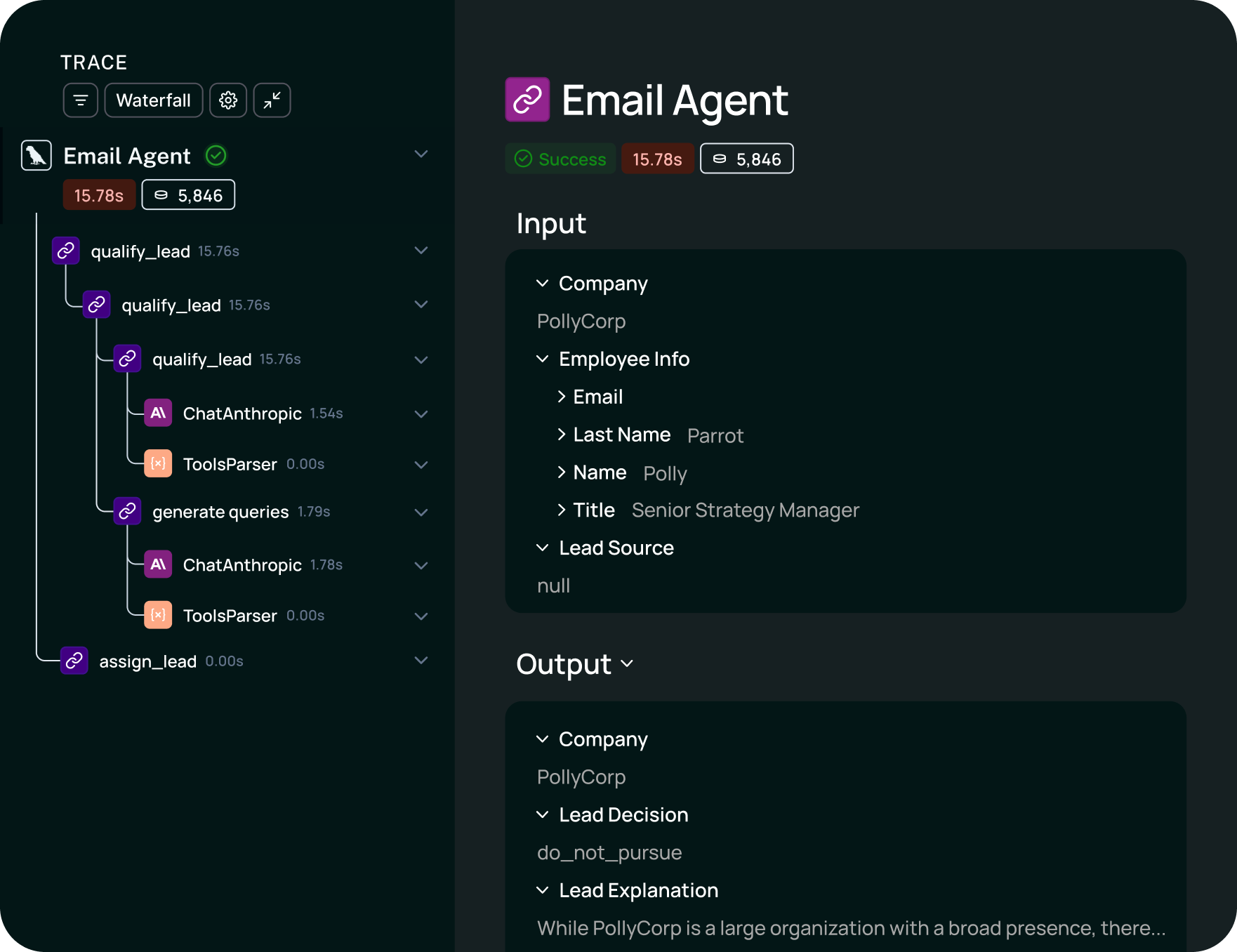

Braintrust connects every stage of the LLM development lifecycle within a single platform, from logging production traces to running evaluations in CI to reviewing outputs with human annotators. Where PromptLayer versions and deploys prompts, Braintrust measures whether those prompts actually work in production and blocks the ones that don't before they ship.

The evaluation system supports three scoring methods that run at the trace and span level:

LLM-as-a-judge scorers let teams define quality criteria in natural language.

Code-based scorers handle deterministic checks like format validation or keyword matching.

Human review workflows enable domain experts to annotate outputs via configurable interfaces tailored to their specific tasks.

All three scoring types feed into the same evaluation framework, so teams can combine automated and human judgment in a single eval run. Braintrust also supports offline evals on test datasets and online evals on live production traffic, so teams can evaluate changes before release and monitor quality after deployment inside the same system.

CI/CD quality gates, via native GitHub Actions, run evaluations automatically on every prompt or model change. If scores drop below a defined threshold, the release is blocked. Teams that previously relied on manual review before shipping prompt changes can enforce quality standards programmatically, a practice that becomes necessary as the team and the number of prompts scale.

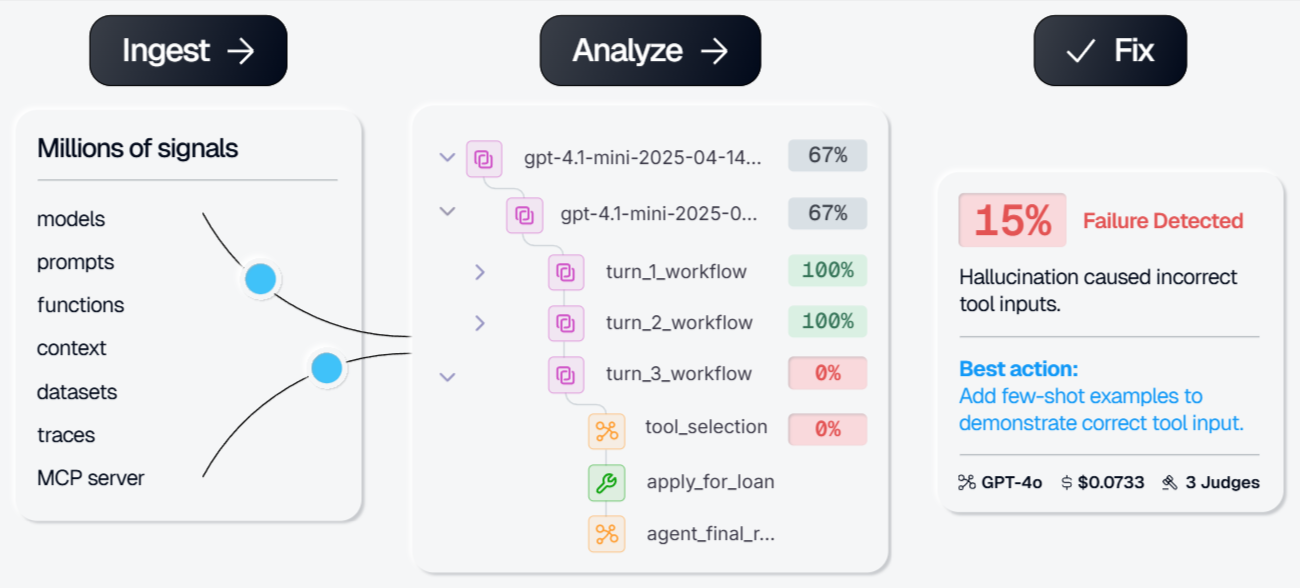

Loop, Braintrust's AI agent, generates test datasets, creates scorers, and iterates on prompts based on natural language instructions. Instead of manually writing evaluation cases, teams describe what they want to optimize, and Loop produces the datasets and scoring functions for testing. Production traces become evaluation test cases with one click through Brainstore, so real-world failures feed directly into the next eval cycle. Teams can also use the Playground to compare prompts, models, and outputs side by side with real traces and evaluation results, making iteration easier without moving work into a separate tool.

The Braintrust Gateway routes requests across providers, including OpenAI, Anthropic, Google, AWS, and others, with built-in caching, observability, and multi-provider support. Braintrust is used by leading AI teams at Notion, Stripe, Vercel, Zapier, Airtable, Instacart, and Ramp. Notion reported increasing its issue-resolution rate from 3 fixes per day to 30 fixes per day after implementing systematic evaluation with Braintrust.

Pros

- Trace-level scoring with LLM-as-a-judge, code-based, and human evaluators in one framework

- CI/CD quality gates through native GitHub Actions block regressions before deploy

- Loop automates dataset generation, scorer creation, and prompt iteration from natural language

- Production traces convert to eval test cases in one click through Brainstore

- Supports offline evals in development and online evals on production traffic

- Playground supports side-by-side comparison of prompts, models, and outputs

- Generous free tier with unlimited users

- Braintrust Gateway supports multi-provider routing, caching, and built-in observability

Cons

- Teams focused only on prompt versioning may find the evaluation workflow broader than they need

- Self-hosting is only available on the Enterprise plan

Pricing

Free tier with 10K scores. Pro plan at $249/month. Enterprise plan with custom pricing, self-hosting, and dedicated support. See pricing details.

2. LangSmith

Best for teams building on LangChain or LangGraph.

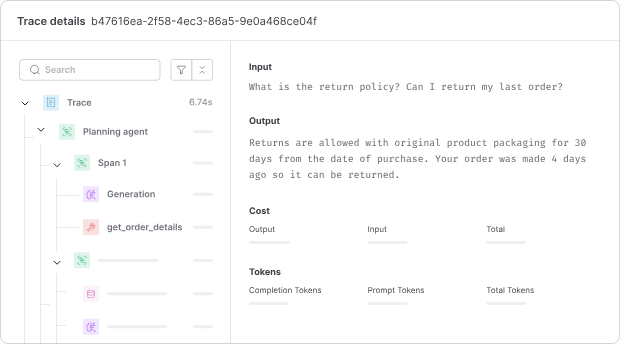

LangSmith is designed for teams working inside the LangChain ecosystem and combines tracing, evaluation, and prompt management around that stack. Teams using LangChain or LangGraph can integrate prompt versioning, execution traces, and evaluation datasets directly into their existing framework, reducing setup work compared with more framework-agnostic tools. LangSmith also captures nested traces across chains, agents, and tool calls, and supports dataset-based testing with custom metrics and LLM-based scoring.

Pros

- Native LangChain and LangGraph integration

- Nested trace visualization for multi-step workflows

- Dataset-based evaluation with LLM-based scoring

- Prompt Hub for versioning and sharing prompts

Cons

- Best fit depends heavily on the LangChain ecosystem

- Less suitable for teams that want framework-agnostic evaluation workflows

Pricing

Free tier with 5,000 traces/month. Paid plan starts at $39 per user/month. Enterprise pricing with self-hosting available on request.

See how Braintrust and LangSmith compare in our detailed deep dive.

3. Maxim AI

Best for teams that need human review workflows and structured evaluation across multiple evaluator types.

Maxim AI is built for teams that want multiple evaluation methods in one system, including deterministic checks, statistical scoring, LLM-as-a-judge, and human review. The multi-eval workflow can suit teams running multi-step agent workflows, where different parts of the system require different evaluation methods. Maxim also includes simulation workflows, observability features, and collaboration features for reviewers outside engineering.

Pros

- Deterministic, statistical, LLM-as-a-judge, and human evaluators in one system

- Simulation workflows for scenarios and personas

- Observability features with dashboards and monitoring

- Collaboration features for technical and non-technical reviewers

Cons

- Broader configuration surface can make the setup more involved

- Smaller ecosystem than larger evaluation platforms

Pricing

Free plan with 10K logs per month, paid plan starts at $29/seat/month. Custom enterprise pricing.

4. Galileo

Best for teams that need packaged guardrails and runtime protection alongside evaluation.

Galileo is designed primarily for runtime protection and packaged quality checks, with less emphasis on release-control workflows. Luna-2 models run evaluation metrics at low latency, which makes Galileo a fit for teams that want guardrails operating on live traffic. Galileo includes out-of-the-box coverage for issues such as hallucination, prompt injection, PII leakage, toxicity, and context adherence, and Signals helps surface recurring failure patterns across production traces.

Pros

- Low-latency guardrail scoring for live traffic

- Packaged coverage for common LLM risk categories

- Signals help surface recurring failure patterns

- Supports SaaS, VPC, and on-premises deployment

Cons

- More focused on runtime protection than release gating

- Smaller teams may face a slower buying process at higher tiers

Pricing

Free tier with 5,000 traces/month. Paid plan starts at $100/month. Custom enterprise pricing.

5. Weights & Biases Weave

Best for teams already standardized on W&B for ML experiment tracking.

Weave extends Weights & Biases into LLM tracing and evaluation, so it fits teams that already use W&B for ML workflows and want LLM work in the same environment. Weave supports lightweight instrumentation, logs model calls and trace data, and includes evaluation features such as custom scorers, LLM-as-a-judge, and leaderboards for comparing configurations. Weave's unified workspace works well for experimentation and tracking across ML and LLM systems.

Pros

- Fast setup for Python workflows

- Unified workspace for ML experiments and LLM traces

- Evaluation leaderboards for comparing configurations

- Python and TypeScript SDK support

Cons

- No native CI/CD quality gates for blocking releases on eval scores

- Better aligned with experimentation than release-control workflows

Pricing

Free tier with limited seats, storage, and ingestion. Paid plans start at $60 per month. Enterprise pricing available on request.

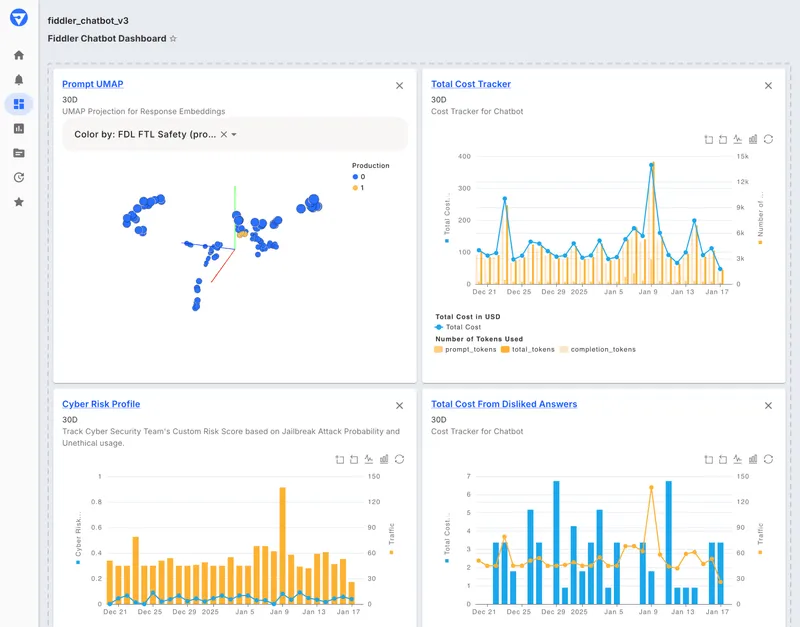

6. Fiddler AI

Best for compliance-heavy enterprises in regulated industries.

Fiddler AI is designed for organizations that need to monitor and govern both traditional ML systems and LLM applications. Fiddler emphasizes explainability, bias detection, compliance reporting, and deployment options for strict data-handling requirements, including air-gapped environments. Fiddler also includes scoring for common LLM risk categories such as hallucination, toxicity, PII leakage, and prompt injection, along with root cause analysis and drift monitoring.

Pros

- Unified monitoring for ML and LLM systems

- Built-in scoring for common LLM risk categories

- Governance features for bias detection and explainability

- Air-gapped and on-premises deployment options

Cons

- More focused on governance and monitoring than evaluation tied to release workflows

- Enterprise-oriented packaging is less suited to smaller teams

Pricing

Free guardrails plan with limited functionality. Custom pricing for full AI observability and enterprise features.

Best PromptLayer alternatives compared (2026)

| Tool | Best for | Core strength | Main limitation | CI/CD gates | Eval depth | Tracing | Starting price |

|---|---|---|---|---|---|---|---|

| Braintrust | Evaluation-first teams | Evaluation tied directly to release decisions | Self-hosting limited to Enterprise | Native GitHub Actions | LLM, code, and human scorers in one framework | Full multi-step traces with span-level evaluation | Free to start |

| LangSmith | LangChain-native teams | LangChain ecosystem integration | Less integrated outside LangChain | Limited | Dataset-based evals | Nested execution trees | Free tier available |

| Maxim AI | Human review workflows | Multi-evaluator framework | Broader setup and smaller ecosystem | Limited | Deterministic, statistical, LLM, human | Distributed tracing | Free tier available |

| Galileo | Runtime guardrails | Low-latency packaged guardrails | Less focused on eval tied to release workflows | Limited | 20+ built-in metrics | Production trace analysis | Free tier available |

| W&B Weave | W&B-standardized ML teams | Unified ML + LLM tracking | No native release gating | No | Custom scorers, leaderboards | Function-level instrumentation | Free tier available |

| Fiddler AI | Regulated enterprise governance | Compliance and explainability | Less suited to evaluation workflows tied to release decisions | No | Trust Model scoring | Hierarchical span analysis | Free tier available |

Ready to move from prompt ops to evaluation infrastructure? Start free with Braintrust and ship your first CI/CD quality gate today.

Why Braintrust is the best PromptLayer alternative

Braintrust is the strongest PromptLayer alternative for teams that need evaluation to determine what reaches production. PromptLayer is built around prompt management, while Braintrust is built around evaluation, release control, and continuous improvement from production behavior.

Braintrust gives teams one system for evaluating changes before release, monitoring quality in production, reviewing outputs with human annotators, and turning production failures into reusable tests. Teams can keep the full quality workflow, from prompt management through evaluation, inside one platform.

Leading AI teams, including Notion, Stripe, Vercel, and Zapier, use Braintrust in production. Teams that tie evaluation to release decisions catch problems before users do. Start free with Braintrust or schedule a demo.

When PromptLayer is still the right choice

PromptLayer remains a reasonable choice for teams whose workflow centers on prompt registry, versioning, and deployment. The visual editor is useful in organizations where product managers, copywriters, or domain experts need to iterate on prompts without engineering support. PromptLayer can also work for smaller teams focused on prompt operations that do not yet need dedicated evaluation infrastructure.

FAQs: Best PromptLayer alternatives in 2026

What is an LLM evaluation platform?

An LLM evaluation platform measures output quality through automated scoring, human review, and regression testing. Teams use eval platforms to evaluate outputs against datasets with methods such as LLM-as-a-judge, code-based checks, and human annotation. Braintrust brings scoring, CI workflows, and production observability into the same system, so teams can measure quality before release and monitor it in production.

What is the difference between prompt management and LLM evaluation?

Prompt management covers storing, versioning, deploying, and collaborating on prompts across a team. LLM evaluation measures whether prompts produce correct, safe, and reliable outputs through scoring, regression testing, and human review. Prompt management answers which prompt is live, while LLM evaluation answers whether the prompt is performing well. Braintrust connects the two by tying prompt changes to evaluation results and release decisions.

What is the best PromptLayer alternative for LLM evaluation?

Braintrust is the strongest PromptLayer alternative for teams focused on LLM evaluation. Braintrust supports trace-level scoring, CI/CD quality gates, production observability, and AI-assisted optimization. The free tier includes 10K evaluation scores and unlimited users, which gives teams enough room to test Braintrust against real workloads before moving to a paid plan.

Is Braintrust better than PromptLayer?

Braintrust and PromptLayer are built for different needs. PromptLayer focuses on prompt management, including versioning, visual editing, deployment, and collaboration for non-technical stakeholders. Braintrust focuses on evaluation and observability alongside prompt management, with infrastructure to measure AI quality, gate releases based on eval results, and turn production failures into reusable tests. Teams focused solely on prompt operations may find PromptLayer sufficient, while teams that need prompt management tied to evaluation to inform release decisions will usually find Braintrust a better fit.

Which PromptLayer alternative is best for LangChain teams?

LangSmith is the strongest fit for teams building on LangChain or LangGraph. LangSmith combines tracing, prompt versioning, and evaluation datasets within that ecosystem, reducing setup work for teams already committed to those frameworks. Teams that want CI/CD quality gates and framework-agnostic evaluation infrastructure should consider Braintrust.

Which PromptLayer alternative is best for enterprise governance?

Fiddler AI is the strongest fit for enterprises in regulated industries that need compliance-focused monitoring and governance. Fiddler provides ML and LLM monitoring, explainability, bias detection, fairness analysis, and deployment options for strict data-handling requirements. Teams that also need evaluation tied to release decisions may find Fiddler less complete, since it is built more around governance and monitoring than CI-based evaluation workflows. For teams that need governance alongside release control, Braintrust is the stronger choice.