Datadog LLM observability alternatives (2026): Better tools for AI quality

Braintrust is the best overall Datadog alternative for teams that want evaluations, CI/CD quality gates, and production feedback loops in a single workflow.

Other top alternatives:

- LangSmith - Strongest fit for teams already invested in the LangChain ecosystem.

- Galileo - Packaged real-time guardrails powered by fast, low-cost evaluation models.

- W&B Weave - Best for organizations already using Weights & Biases for ML experiment tracking.

- Fiddler AI - Best for governance-heavy enterprises that need unified monitoring across traditional ML and LLM systems, plus compliance reporting.

Pick Braintrust if you need evaluation results to block regressions before deployment.

Monitoring AI systems is not the same as improving AI quality

Datadog does a good job helping teams monitor LLM systems with traces, latency tracking, token cost breakdowns, and dashboards that connect LLM performance to the rest of the infrastructure stack. For teams already using Datadog across their systems, adding LLM observability feels like a natural extension.

Datadog shows what happened inside an LLM pipeline, but it does not determine whether the output was actually good or help teams improve output quality systematically over time. Teams that need evals, CI/CD quality gates, regression prevention, and production feedback loops need a platform built for AI quality, not just monitoring.

This guide covers the five strongest Datadog alternatives for LLM observability, with Braintrust as the top recommendation for teams that want evaluation results to block regressions before deployment.

Why teams look for Datadog LLM observability alternatives

Datadog starts from dashboards, not from evals

Datadog's LLM Observability product extends the same monitoring-first approach Datadog uses for infrastructure and APM. Teams instrument the application, send traces to Datadog, and use dashboards to track latency, error rates, and token costs. That approach assumes visibility into system behavior is enough to diagnose and fix problems. The assumption holds for traditional infrastructure but breaks down for AI, because an LLM can return a fast, error-free response that is completely wrong.

AI quality requires output-level evaluation

A 200 ms response with zero errors can still hallucinate, ignore the system prompt, or produce an answer that is technically correct but useless to the end user. Datadog Experiments allows teams to create datasets from production traces and run evaluations, but those evaluations remain adjacent to the monitoring workflow rather than central to it. Teams that treat AI quality as a primary release concern often outgrow Datadog's evaluation capabilities quickly.
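
To make the gap concrete, here is a minimal Python sketch in which a response passes every operational check and still fails an output-level check. It does not use Datadog's or any other vendor's API; the `LLMResponse` fields, the latency threshold, and the `contains_expected_fact` check are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class LLMResponse:
    """Hypothetical record of one LLM call; field names are illustrative."""
    latency_ms: float
    status_code: int
    output: str


def passes_operational_checks(resp: LLMResponse, max_latency_ms: float = 500.0) -> bool:
    # What a monitoring dashboard typically sees: speed and error status.
    return resp.status_code == 200 and resp.latency_ms <= max_latency_ms


def contains_expected_fact(resp: LLMResponse, expected: str) -> bool:
    # A deliberately simple output-level check: is the expected fact in the answer?
    return expected.lower() in resp.output.lower()


# A fast, error-free response that is simply wrong.
resp = LLMResponse(
    latency_ms=200.0,
    status_code=200,
    output="The capital of Australia is Sydney.",
)

operational_ok = passes_operational_checks(resp)
quality_ok = contains_expected_fact(resp, expected="Canberra")
print(f"operational_ok={operational_ok}, quality_ok={quality_ok}")
```

The response looks healthy on latency and error status, yet the output check fails. A real evaluation would use a stronger scorer than substring matching, but the split between the two kinds of checks is the same.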

Missing workflows for continuous AI improvement

When a team finds a bad output in production, the team needs to turn the trace into a test case, run the test case through an eval suite, iterate on prompts or model settings, verify the fix in CI, and confirm the improvement holds in production. Datadog does not connect these steps into a single workflow. Teams end up exporting data, manually recreating scenarios, and relying on separate tools for evaluation and experimentation. The extra handoffs slow iteration and make it harder to maintain regression prevention.
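
The loop described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not any platform's API: the trace is a plain dictionary, `EvalCase`, `trace_to_eval_case`, and `run_suite` are hypothetical names, and the two task functions are stand-ins for an old and a fixed prompt version.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One reusable test case derived from a production trace."""
    input: str
    expected: str


def trace_to_eval_case(trace: dict, corrected_output: str) -> EvalCase:
    # Keep the production input; store the reviewed, corrected answer as the expectation.
    return EvalCase(input=trace["input"], expected=corrected_output)


def run_suite(task: Callable[[str], str], cases: list[EvalCase]) -> float:
    # Fraction of cases where the task's output matches the expectation exactly.
    if not cases:
        return 0.0
    passed = sum(1 for c in cases if task(c.input).strip() == c.expected.strip())
    return passed / len(cases)


# Hypothetical bad output found in production.
bad_trace = {"input": "What is 2 + 2?", "output": "5"}
suite = [trace_to_eval_case(bad_trace, corrected_output="4")]


def old_task(question: str) -> str:
    return "5"  # stand-in for the prompt version that produced the bad output


def fixed_task(question: str) -> str:
    return "4"  # stand-in for the revised prompt


old_score = run_suite(old_task, suite)
new_score = run_suite(fixed_task, suite)
print(f"old={old_score}, new={new_score}")
```

Once the failing trace lives in the suite, every later prompt or model change is checked against it, which is what turns a one-off fix into regression prevention.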

5 best Datadog LLM observability alternatives in 2026

1. Braintrust

Best for AI SaaS teams that want to measure and improve AI output quality, alongside LLM monitoring.

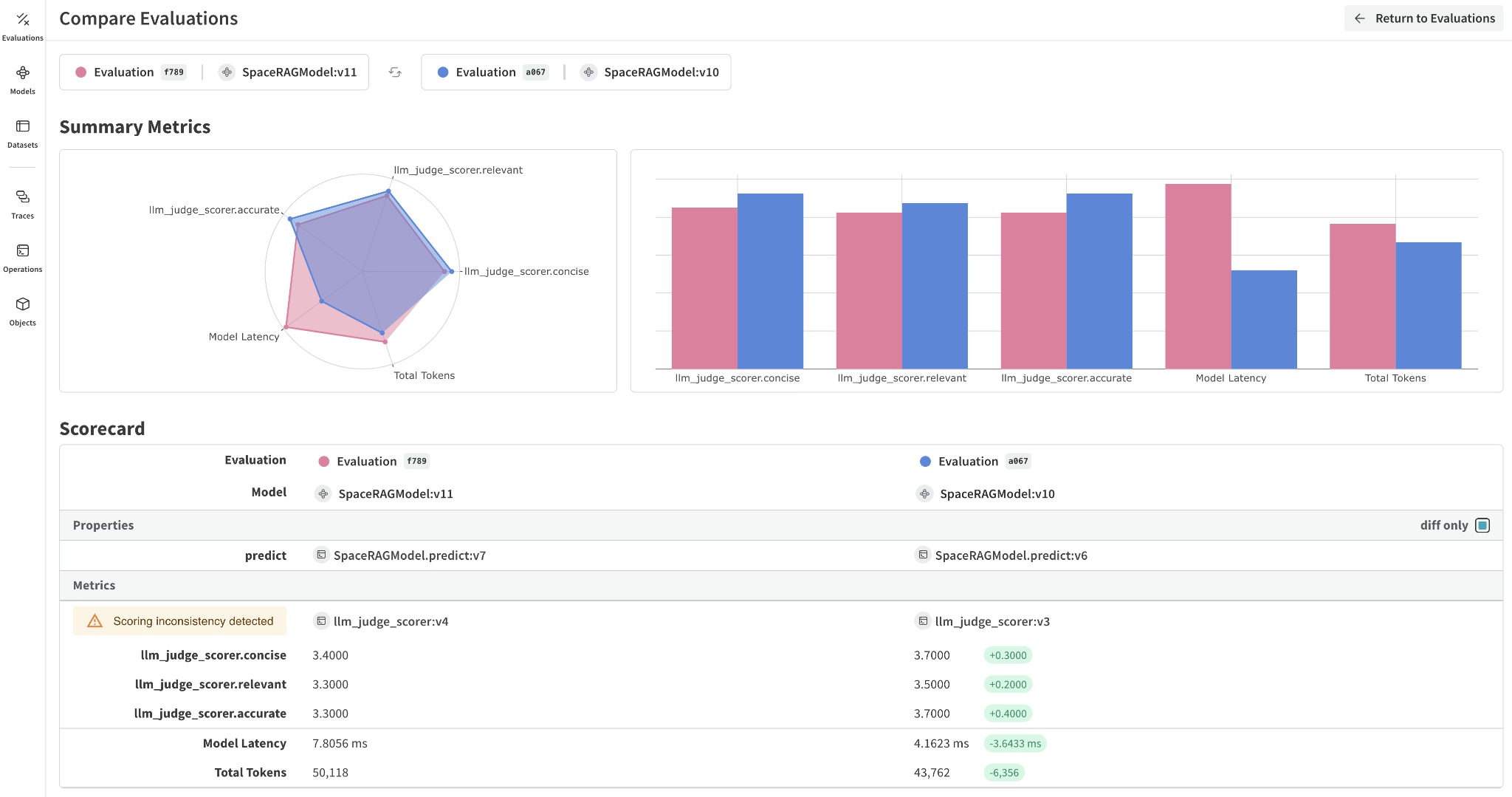

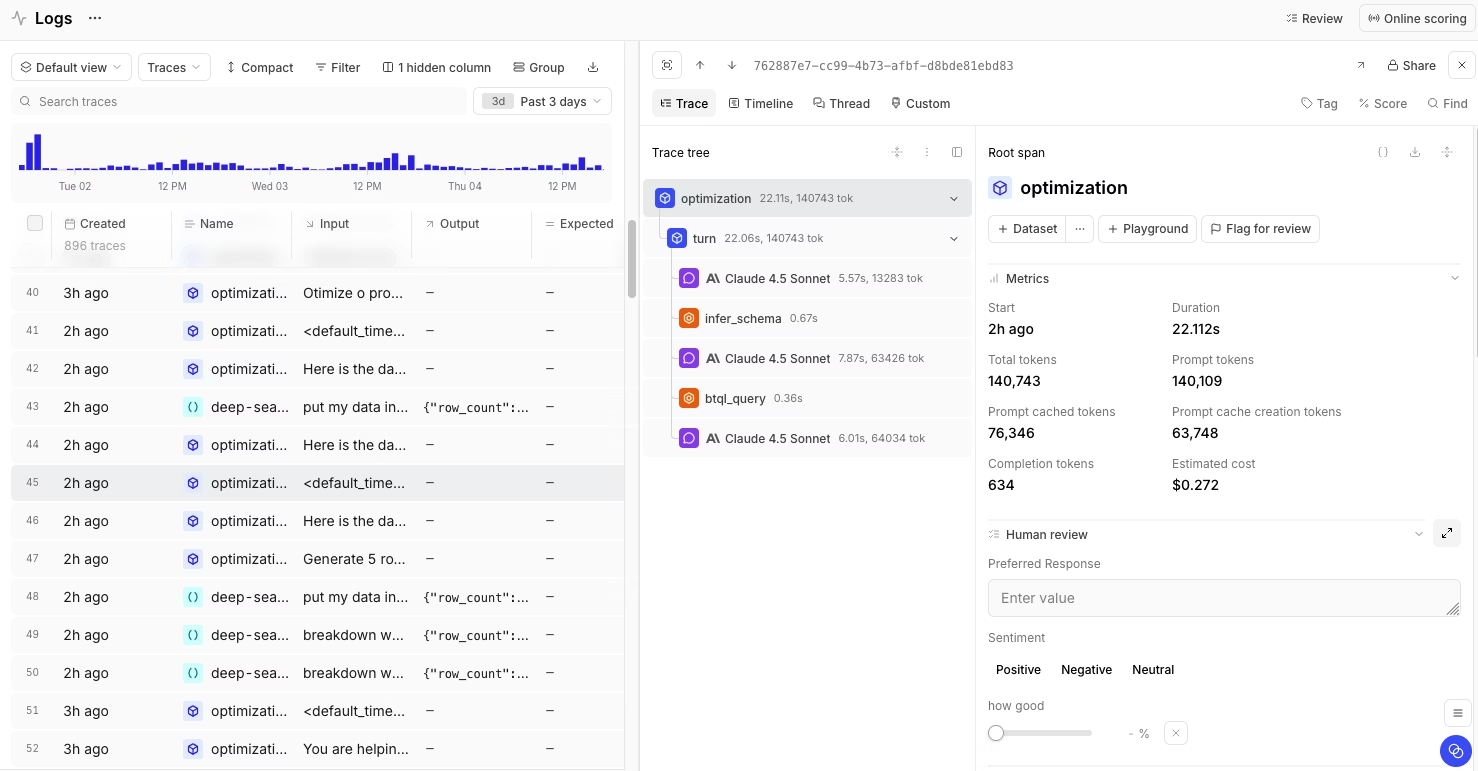

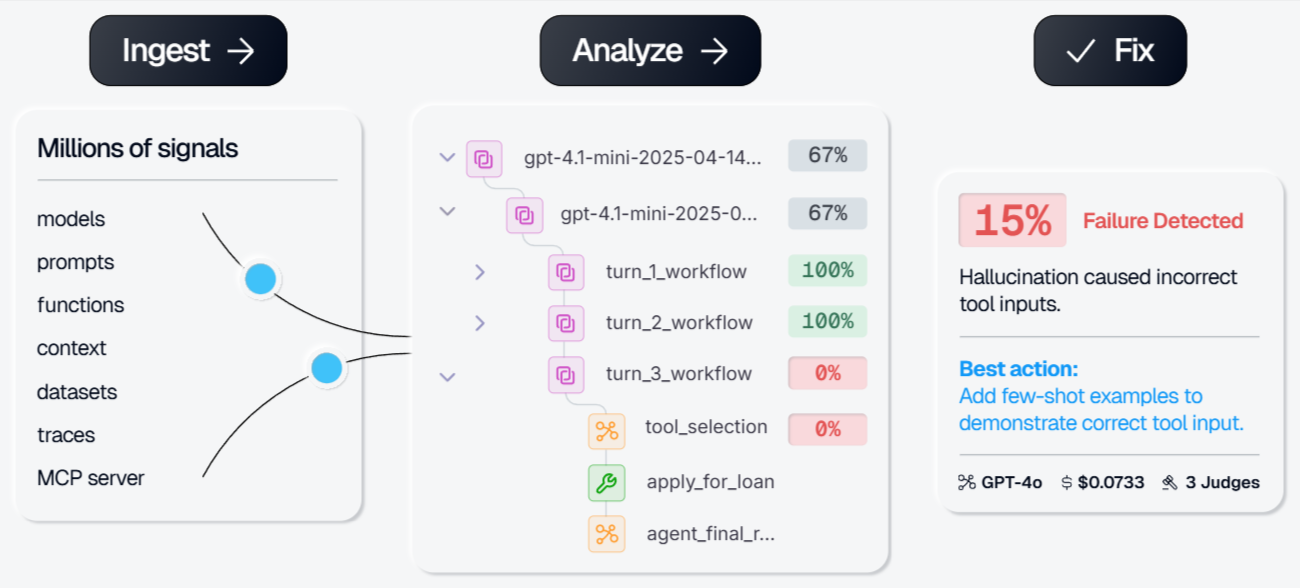

Braintrust is an AI observability and evaluation platform that treats evals as the center of the development workflow rather than a monitoring add-on. It captures detailed traces across multi-step LLM and agent workflows, and every trace logs duration, token counts (including cached and reasoning tokens), tool calls, errors, and estimated cost. Unlike Datadog, where traces primarily feed dashboards, Braintrust traces feed directly into evaluations. Any production trace can become a test case with a single click, and eval results appear on every pull request through CI/CD quality gates. Teams can block deployments when scores regress, turning evaluation into an automated release requirement instead of a manual review step.
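
As a rough illustration of what a quality gate does in CI, the sketch below compares candidate eval scores against a baseline and returns a non-zero exit code when any metric regresses. It is not Braintrust's implementation or GitHub Action; the function names, metric names, scores, and the 0.02 tolerance are all assumptions made for the example.

```python
def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.02,
) -> dict[str, float]:
    """Return {metric: drop} for metrics whose score fell by more than tolerance."""
    regressions = {}
    for metric, base_score in baseline.items():
        cand_score = candidate.get(metric, 0.0)  # a missing metric counts as a score of 0
        drop = base_score - cand_score
        if drop > tolerance:
            regressions[metric] = round(drop, 4)
    return regressions


def gate(baseline: dict[str, float], candidate: dict[str, float], tolerance: float = 0.02) -> int:
    """Return a process exit code: 0 to allow the merge, 1 to block it."""
    regressions = find_regressions(baseline, candidate, tolerance)
    for metric, drop in regressions.items():
        print(f"REGRESSION {metric}: score dropped by {drop}")
    return 1 if regressions else 0


# Hypothetical scores from the main branch and from the pull request.
baseline_scores = {"factuality": 0.91, "helpfulness": 0.84}
candidate_scores = {"factuality": 0.85, "helpfulness": 0.85}

exit_code = gate(baseline_scores, candidate_scores)
print(f"exit_code={exit_code}")
```

In a CI job, the script would end with `sys.exit(exit_code)`, so a regression fails the check and blocks the merge. The tolerance keeps small score fluctuations from failing every build.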

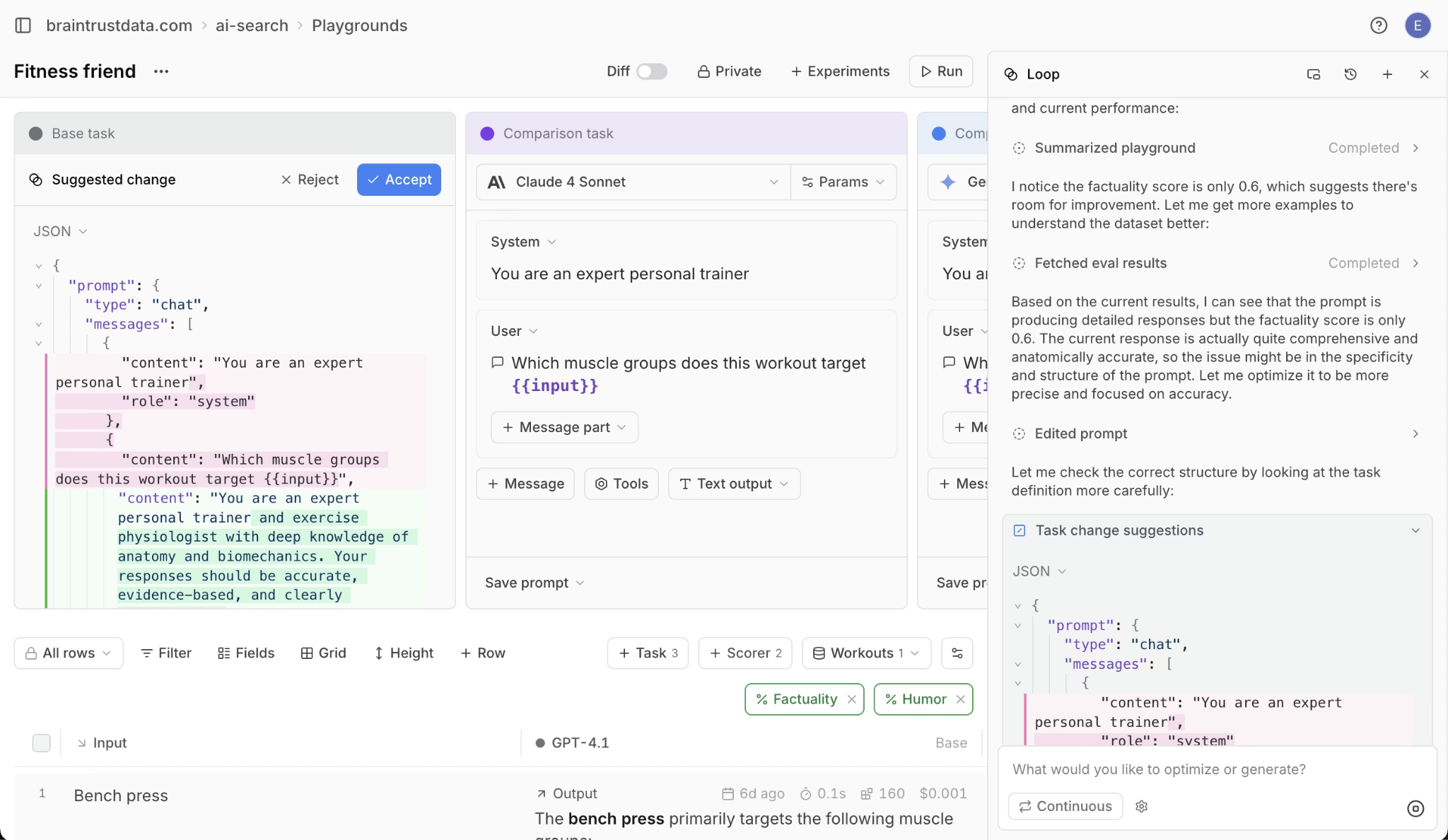

The evaluation system supports offline evals for pre-deployment testing, online scoring on live traffic, LLM-as-a-judge scoring, custom-code scorers, and configurable human-review workflows. Teams can iterate in shared playgrounds, compare prompts, models, or retrieval settings side by side, and promote stronger configurations into experiments and CI workflows. Loop, Braintrust's built-in AI agent, helps teams generate better prompts, scorers, and datasets tailored to the optimization goal.
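
A side-by-side comparison boils down to running each variant over the same dataset with the same scorer. The Python sketch below shows that idea with a simple custom-code scorer. It is not Braintrust's SDK: `token_recall`, `compare`, the dataset, and the lambda "prompts" standing in for model calls are hypothetical.

```python
from statistics import mean
from typing import Callable

Dataset = list[tuple[str, str]]  # (input, expected) pairs


def token_recall(output: str, expected: str) -> float:
    # Custom-code scorer: share of expected tokens that appear in the output.
    expected_tokens = set(expected.lower().split())
    if not expected_tokens:
        return 0.0
    output_tokens = set(output.lower().split())
    return len(expected_tokens & output_tokens) / len(expected_tokens)


def compare(variants: dict[str, Callable[[str], str]], dataset: Dataset) -> dict[str, float]:
    # Run every variant over the same dataset and report its mean score.
    return {
        name: mean(token_recall(task(inp), exp) for inp, exp in dataset)
        for name, task in variants.items()
    }


dataset = [("Capital of France?", "Paris"), ("Capital of Japan?", "Tokyo")]
variants = {
    "prompt_a": lambda q: "Paris",  # stand-in for a real model call
    "prompt_b": lambda q: "Paris" if "France" in q else "Tokyo",
}

results = compare(variants, dataset)
print(results)
```

Because both variants see identical inputs and the same scorer, the difference in mean score can be attributed to the variant itself rather than to the test data.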

Brainstore, Braintrust's dedicated database for AI observability, is designed to handle the data patterns generated by LLM traces. AI traces are significantly larger than traditional observability records, with a typical trace reaching 10 MB and some traces reaching tens of GB. LLM traces also continue to change after initial ingestion, as automated judges add scores and human reviewers add annotations. Brainstore supports arbitrary queries over traces, immediate visibility for late-arriving spans, and full-text search across millions of records. Teams can also deploy Brainstore on their own infrastructure to meet data residency requirements.

Braintrust's CLI allows teams to run evals, manage datasets, and push prompt changes from the command line, which fits existing development workflows without forcing engineers into the UI for every task.

Pros

- Evals, tracing, experiments, and CI/CD quality gates with GitHub Action support for posting eval results on pull requests

- Supports offline evals before deployment and online scoring on production traffic

- Built-in scorers with flexible scorer types, including custom code scorers, LLM-as-a-judge scoring, and human review workflows

- Shared playgrounds for comparing prompts, models, and retrieval settings during team review

- One-click workflow for turning any production trace into a test case

- Loop agent for generating improved prompts, scorers, and test cases

- Brainstore supports AI-scale trace data, including late-arriving annotations and full-text search

- Unlimited users on all plans, including the free tier, so pricing scales with data volume rather than seat count

- SOC 2 Type II, HIPAA compliance, SSO, RBAC, and hybrid deployment options on Enterprise

Cons

- Teams evaluating only basic LLM monitoring may not need the full evaluation and release workflow

- Enterprise features like custom data retention, S3 export, and BAA require the Enterprise plan

Pricing

Braintrust's pricing is usage-based with no per-seat charges. The free plan includes 1M trace spans, 10K scores, and unlimited users. Paid plans start at $249/month, with custom enterprise pricing available. See pricing details.

2. LangSmith

Best for teams building with LangChain or LangGraph that want native tracing and debugging.

LangSmith is the observability and evaluation platform from the LangChain team. If your application is built on LangChain or LangGraph, setup takes a single environment variable, and LangSmith automatically captures traces that understand LangChain's internal chain and agent structures. LangSmith supports dataset-based evaluations, annotation queues, and prompt versioning. LangSmith works well as a debugging and evaluation tool within the LangChain ecosystem, but teams using other frameworks or building framework-agnostic applications will find the integration less seamless. The per-seat pricing model also makes costs scale linearly with team size, which can become expensive for larger organizations.

Pros

- Native LangChain/LangGraph integration with automatic trace capture

- Dataset-based evaluations with annotation queues for human review

- Prompt versioning and A/B testing support

- LangSmith Deployment for managed agent hosting

Cons

- Per-seat pricing adds up for larger teams

- Strongest value is tied to the LangChain ecosystem, with less differentiation for framework-agnostic teams

Pricing

Free tier with 5,000 traces/month. Paid plan starts at $39 per user/month. Enterprise pricing with self-hosting available on request.

See how Braintrust and LangSmith compare in our detailed deep dive.

3. Galileo

Best for teams that need packaged real-time guardrails.

Galileo is an AI evaluation and observability platform built around Luna-2, a family of small models tuned for evaluation tasks. Galileo fits best for teams that want packaged guardrails for hallucinations, prompt injection, PII leaks, and related risks, especially when broad production coverage matters.

Pros

- Luna-2 evaluators run at sub-200ms latency, enabling real-time guardrails on production traffic

- Built-in evaluation metrics for hallucination, toxicity, PII, prompt injection, and related risks

- Agent Control open-source governance framework for multi-agent systems

- VPC and on-premises deployment for regulated industries

Cons

- Enterprise pricing requires a sales conversation, which makes cost evaluation slower for smaller teams

- Less emphasis on iterative evaluation and development workflows than platforms centered on release gating

Pricing

Free tier with 5,000 traces/month. Paid plan starts at $100/month. Custom enterprise pricing.

4. W&B Weave

Best for teams already using Weights & Biases for ML experiment tracking.

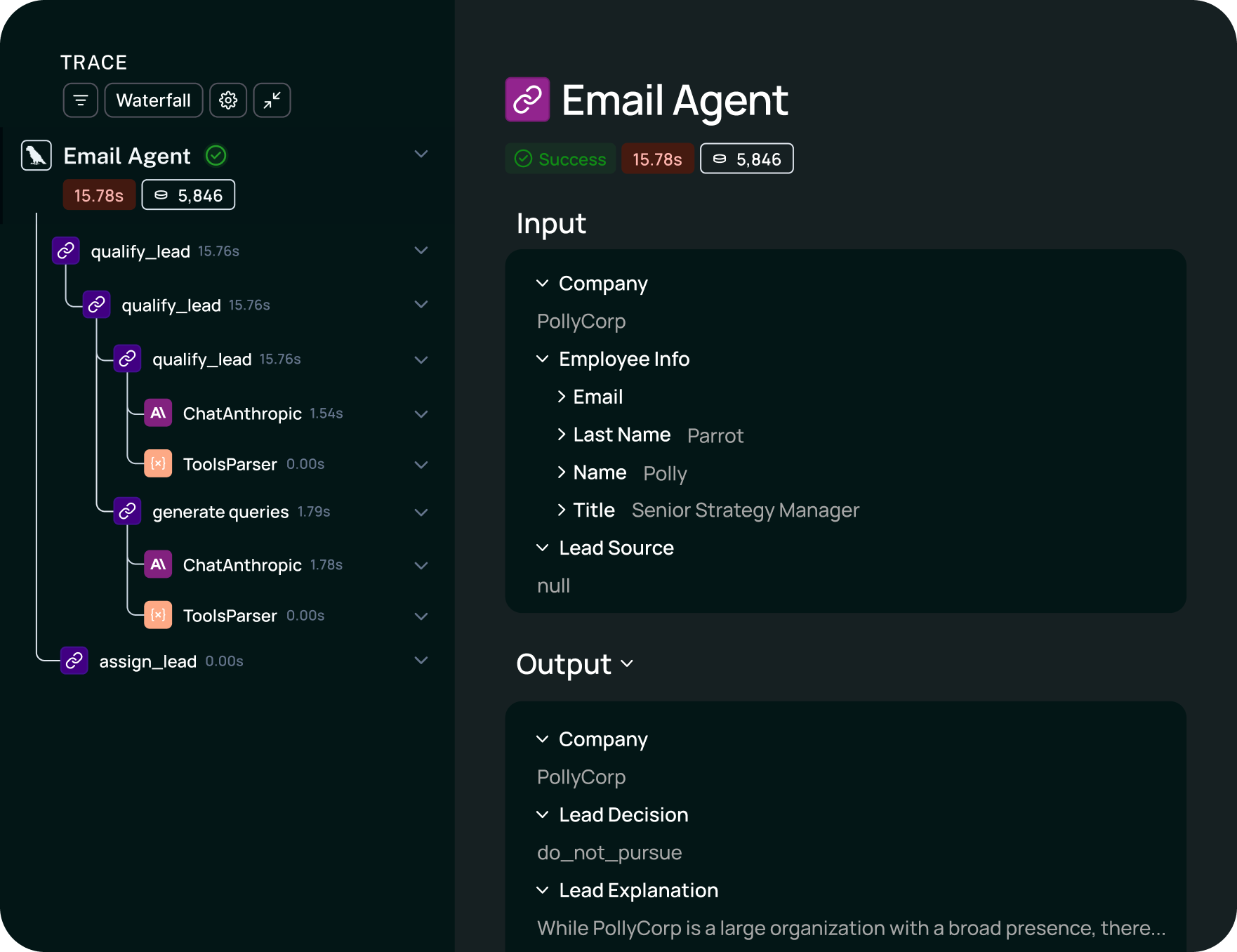

W&B Weave extends the Weights & Biases platform into LLM observability and evaluation. Weave captures structured traces using a simple @weave.op decorator, automatically logs inputs, outputs, costs, and latency, and organizes everything into trace trees that show the full execution path of multi-step workflows. Teams that already use W&B for ML experiment tracking, model versioning, and artifact management can add LLM observability without introducing a new vendor or learning a new interface.
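
To show what decorator-based instrumentation captures, here is a minimal pure-Python sketch of a tracing decorator. It is not Weave's implementation or API: `traced` and `TRACE_LOG` are hypothetical names, the log is an in-memory list rather than a backend, and the sketch records a flat list of calls instead of a full trace tree.

```python
import functools
import time

# Hypothetical in-memory sink; a real tool would ship these records to a backend.
TRACE_LOG: list[dict] = []


def traced(fn):
    """Record inputs, output, and latency for each call to fn."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        result = fn(*args, **kwargs)
        TRACE_LOG.append({
            "op": fn.__name__,
            "inputs": {"args": args, "kwargs": kwargs},
            "output": result,
            "latency_ms": (time.perf_counter() - start) * 1000,
        })
        return result
    return wrapper


@traced
def retrieve(query: str) -> list[str]:
    return [f"doc about {query}"]  # stand-in for a retrieval step


@traced
def answer(query: str) -> str:
    docs = retrieve(query)
    return f"Answer based on {len(docs)} document(s)"  # stand-in for a model call


answer("pricing")
for record in TRACE_LOG:
    print(record["op"], record["output"])
```

The nested `retrieve` call finishes first, so it is logged before `answer`. A production tracer would also attach parent and child identifiers so the calls can be rendered as a tree.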

Pros

- Integration with the broader W&B ecosystem for ML workflows

- Simple instrumentation with the @weave.op decorator and OpenTelemetry support

- Evaluation scoring and dataset management in the same interface

- Open-source components available on GitHub

Cons

- Per-seat pricing scales directly with team size

- Less mature than dedicated AI quality platforms for production-focused evaluation workflows

Pricing

Free tier with limited seats, storage, and ingestion. Paid plans start at $60 per month. Enterprise pricing available on request.

5. Fiddler AI

Best for governance-heavy enterprises running both traditional ML and LLM workloads.

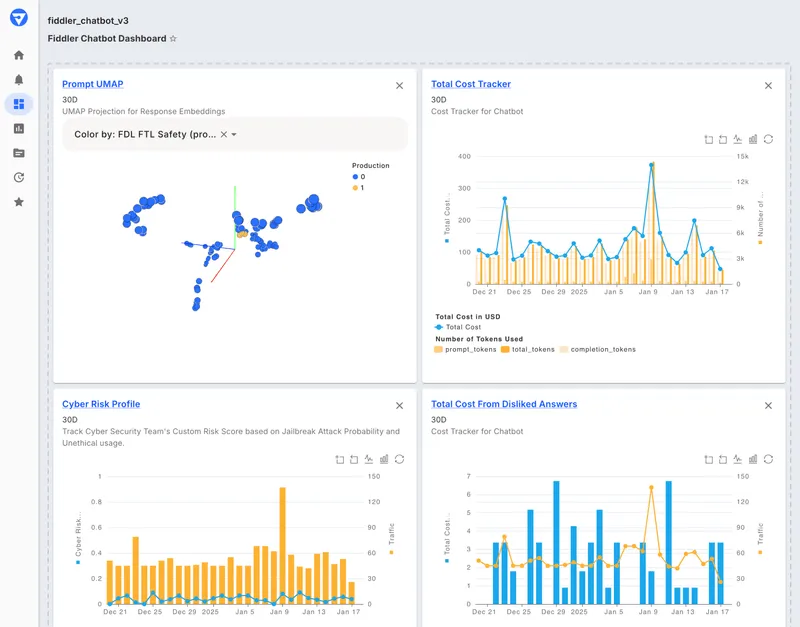

Fiddler AI is an enterprise AI observability and security platform for traditional ML models, LLM applications, and multi-agent systems. Fiddler fits best for organizations where explainability, fairness monitoring, compliance reporting, and unified oversight across predictive and generative AI are primary requirements.

Pros

- Unified monitoring for ML models, LLMs, and agents in one platform

- Explainability features, including feature importance, counterfactual analysis, and fairness metrics

- Built-in metrics covering hallucination, toxicity, PII, drift, and business KPIs

- SaaS, VPC, and on-premises deployment with SOC 2 and HIPAA compliance

Cons

- Enterprise-focused pricing is not publicly listed, which slows down evaluation for smaller teams

- Governance-first design may add complexity for teams focused mainly on AI quality iteration

Pricing

Free guardrails plan with limited functionality. Custom pricing for full AI observability and enterprise features.

Comparison table: Best Datadog LLM observability alternatives (2026)

| Tool | Best for | Core strength | Main limitation | CI/CD quality gates | Evals depth | Tracing/observability | Pricing model | Deployment/enterprise fit |
|---|---|---|---|---|---|---|---|---|
| Braintrust | Teams that need AI quality to determine release decisions | Connects evals, tracing, experiments, and production feedback in one workflow | Some enterprise controls are reserved for the Enterprise plan | Yes, native | Deep (offline evals, online scoring, LLM-as-a-judge, code scorers, human review) | Detailed tracing for multi-step LLM and agent workflows with production-to-eval linkage | Usage-based, no seat fees | Enterprise-ready with SSO, RBAC, hybrid deployment, HIPAA, and self-hosting options |
| LangSmith | Teams already building with LangChain or LangGraph | Native integration with the LangChain ecosystem for tracing and debugging | Best fit remains tied to LangChain-centric workflows | Via SDK | Good (datasets, annotation queues, prompt versioning) | LangChain- and LangGraph-native trace capture | Per-seat + per-trace | Enterprise tier available, but pricing scales with seats |
| Galileo | Teams that want packaged real-time guardrails | Fast built-in evaluators for production monitoring and protection | Less centered on iterative evaluation and release workflows | Via CI integration | Good (20+ built-in metrics) | Guardrail-focused monitoring and evaluation | Usage-based | Enterprise options available, including VPC and on-premises deployment |
| W&B Weave | Teams already standardized on Weights & Biases for ML workflows | Keeps LLM observability and evaluation inside the W&B system | Less mature for production AI quality workflows | Limited | Good (custom scorers, dataset testing) | Application trace visibility inside the W&B platform | Per-seat | Best fit for existing W&B users |
| Fiddler AI | Governance-heavy enterprises running ML and LLM systems together | Unified oversight across traditional ML and generative AI with explainability and compliance reporting | Governance layer may feel heavy for teams focused mainly on iteration speed | Limited | Good (100+ metrics) | Coverage across ML, LLM, and agent systems | Per-trace + enterprise | Built for regulated enterprises needing compliance, VPC, or on-premises deployment |

Ready to upgrade your LLM observability workflow? Start free with Braintrust.

How we chose the best Datadog alternatives

We evaluated Datadog alternatives across six criteria.

Evals depth measures whether a platform supports LLM-as-a-judge scoring, custom-code scorers, human review, and side-by-side experiment comparisons as part of the core workflow.

CI/CD quality gates measure whether teams can block deployments when eval scores regress inside existing CI pipelines.

Tracing and observability cover span-level visibility into multi-step agent workflows, including tool calls, retrieval steps, and intermediate reasoning.

Production feedback loops evaluate how easily teams can convert production traces into reusable test cases and eval datasets without manual export or data wrangling.

Pricing model considers whether billing scales predictably with usage and whether seat-based pricing creates artificial bottlenecks as teams grow.

Enterprise fit covers deployment flexibility, including SaaS, VPC, and on-premises options, along with compliance certifications such as SOC 2 and HIPAA, and access controls such as SSO and RBAC.

Each alternative in this guide was assessed against the full set of criteria, with the strongest overall performer listed first.

Why Braintrust is the best Datadog alternative for AI quality

Datadog helps teams monitor LLM systems. Braintrust enables teams to evaluate output quality, review regressions, and prevent lower-quality changes from being released by using evaluation results directly in the release process.

Braintrust integrates production debugging, evaluation, and release review into a single workflow. Teams can investigate production failures, turn those failures into reusable evaluation cases, compare changes against earlier performance, and use evaluation results during pull request reviews. Engineering, product, and applied AI teams can review the same traces and evaluation results.

Every Braintrust plan includes unlimited users, so access to traces, evaluation results, and debugging context does not become more expensive as more teammates need visibility. Pricing scales with usage rather than headcount, which makes broader collaboration easier to support.

Companies like Notion, Zapier, Stripe, and Vercel run Braintrust in production. Notion reported going from fixing 3 issues per day to 30 after adopting Braintrust because converting production traces into evaluation datasets removed much of the manual work that had been slowing down debugging.

Want to see how Braintrust compares to your current setup? Start free today.

When Datadog is still a fine choice

Datadog remains a reasonable option for teams already standardized on it for infrastructure and application monitoring who want basic LLM visibility without adding another vendor. When reducing vendor count outweighs the need for deeper evaluation workflows, keeping LLM observability inside an existing Datadog contract can reduce operational overhead. Teams that mainly need dashboards, alerts, cost tracking, and infrastructure correlation for LLM applications, rather than a workflow for iterative AI quality improvement, may find Datadog's LLM Observability product sufficient.

FAQs: Best Datadog alternatives in 2026

Is Datadog enough for LLM observability?

Datadog covers core LLM observability needs, including tracing, cost tracking, dashboards, and infrastructure correlation. For teams that mainly want visibility into system behavior, Datadog can be sufficient. Teams that need structured evaluations, CI/CD quality gates, and a direct path from production issues to reusable test cases usually need a platform built for AI quality, such as Braintrust.

What is the best Datadog alternative for AI quality?

Braintrust is the strongest Datadog alternative for teams focused on AI quality because evaluation is built into the development and release workflow. Production traces can be turned into evaluation datasets, evaluation results can gate pull requests, and usage-based pricing with unlimited users avoids per-seat costs that grow linearly as more teammates need visibility.

Which Datadog alternative is best for LangChain teams?

LangSmith is the strongest fit for teams deeply committed to LangChain or LangGraph, as tracing and debugging are closely aligned with those ecosystems. Teams using multiple frameworks, or those that prioritize evaluation depth and release workflows over framework-specific debugging, should consider Braintrust as a better alternative.

Which Datadog alternative is best for enterprise governance?

Fiddler AI is a strong fit for enterprises where governance, explainability, and compliance reporting are primary requirements. Fiddler covers traditional ML and LLM workloads on a single platform and supports regulated deployment models. Teams that need evaluation depth, release control, and continuous AI quality improvement should choose Braintrust over a governance-heavy system centered on compliance reporting.