After instrumenting your application, Braintrust captures every request as traces that you can view, filter, and analyze in real time. This observability enables you to monitor production behavior, identify issues, and gather data for improving your application.

Why observe in Braintrust

Observability in Braintrust creates a feedback loop between production and evaluation. Logs use the same data structure as experiments, which means:

- Instrumentation code works for both logging and evaluation

- Traces capture identical data in production and testing

- Scores and feedback apply to both logs and experiments

- Production data seamlessly becomes evaluation datasets
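To make the shared-structure point concrete, here is a minimal sketch in plain Python. It does not call the Braintrust SDK: the `Record` type, its field names, and the `exact_match` and `to_dataset_row` helpers are hypothetical stand-ins chosen for illustration. The only thing it tries to show is that one record shape lets the same scorer run on a production log and on an evaluation case.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional


@dataclass
class Record:
    """Hypothetical record shape shared by production logs and experiment rows."""
    input: Any
    output: Any
    expected: Optional[Any] = None
    scores: Dict[str, float] = field(default_factory=dict)
    metadata: Dict[str, Any] = field(default_factory=dict)


def exact_match(record: Record) -> float:
    """Toy scorer that accepts any Record, regardless of where it came from."""
    return 1.0 if record.output == record.expected else 0.0


def to_dataset_row(log: Record, corrected_output: Any) -> Record:
    """Turn a production log into an evaluation case by attaching an expected value."""
    return Record(
        input=log.input,
        output=log.output,
        expected=corrected_output,
        metadata={**log.metadata, "source": "production"},
    )


# A production log with a wrong answer becomes a test case with the right one.
production_log = Record(input="2 + 2", output="5", metadata={"user": "u1"})
dataset_row = to_dataset_row(production_log, corrected_output="4")
dataset_row.scores["exact_match"] = exact_match(dataset_row)
```

Because the log and the dataset row are the same type, nothing has to be converted or re-instrumented when a production failure is promoted into an evaluation case.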

View your logs

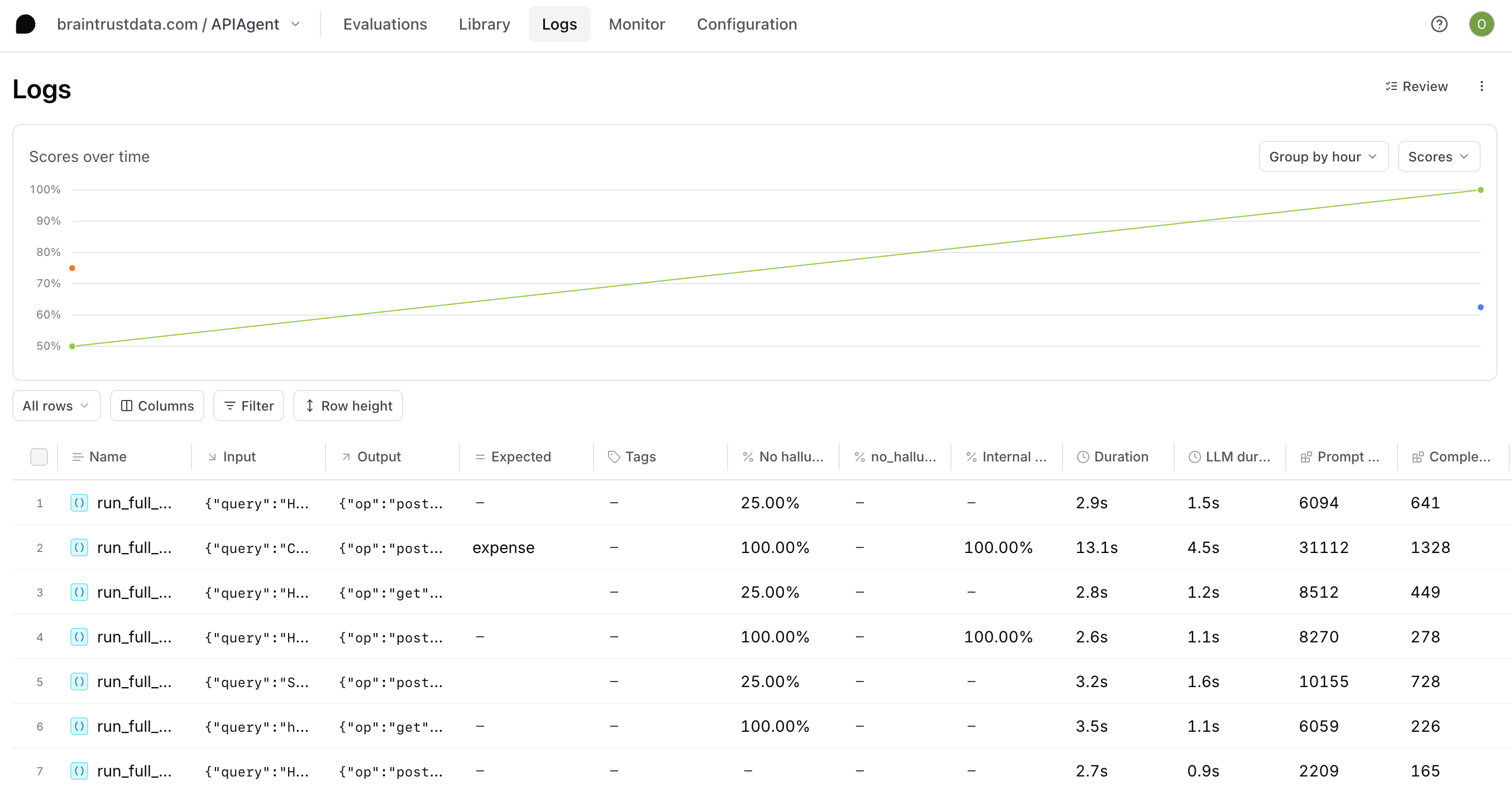

The Logs page displays all traces from your application in a searchable, filterable table. Each row represents a complete trace with its root span. From this page, you can:

- Browse traces and individual spans

- Group related traces by metadata or tags

- Create custom columns to surface important values

- Extract prompts to iterate in playgrounds

- Apply tags to organize traces

Discover insights with Topics

Topics automatically analyze and classify your logs to surface patterns without manual review. Each trace is analyzed by facets that extract short labels, then similar labels are clustered into named topics like user intents, sentiment, and issues. Topics help you:

- Surface user intents automatically

- Identify friction patterns across interactions

- Track sentiment trends over time

- Analyze recurring issues
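The two-stage idea behind Topics (extract a short label per trace, then group similar labels) can be illustrated with a toy sketch. This is not how Braintrust implements Topics: the facet step is stubbed with a hand-written lookup table, and string similarity from Python's `difflib` stands in for whatever clustering the product uses. The trace IDs, labels, and the `0.8` threshold are all assumptions.

```python
from difflib import SequenceMatcher
from typing import Dict, List

# Stand-in for the facet step: in practice a model would produce these labels.
# Trace IDs and labels are made up for illustration.
FACET_LABELS: Dict[str, str] = {
    "t1": "refund request",
    "t2": "refund requests",
    "t3": "login failure",
    "t4": "login failures",
    "t5": "refund request",
}


def similar(a: str, b: str, threshold: float = 0.8) -> bool:
    """Treat two labels as the same topic when their character overlap is high."""
    return SequenceMatcher(None, a, b).ratio() >= threshold


def cluster_labels(labels_by_trace: Dict[str, str]) -> Dict[str, List[str]]:
    """Greedy clustering: each label joins the first topic it resembles."""
    topics: Dict[str, List[str]] = {}  # representative label -> trace IDs
    for trace_id, label in labels_by_trace.items():
        for representative in topics:
            if similar(label, representative):
                topics[representative].append(trace_id)
                break
        else:
            # No existing topic was close enough, so this label starts a new one.
            topics[label] = [trace_id]
    return topics


topics = cluster_labels(FACET_LABELS)
```

In this sketch the first label seen names the topic, so near-duplicates such as "refund requests" fold into "refund request" instead of appearing as separate rows.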

Filter and search

Find specific traces using multiple approaches:

- Filter menu: Quick filters and SQL queries for precise matching

- Deep search: Semantic search to find traces by meaning, not just keywords

- Loop: Ask natural language questions about your logs

- API: Programmatic access for automation

- CLI: Browse interactively or run SQL queries from the terminal with `bt view logs` and `bt sql`
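Precise matching, whether through the filter menu or SQL, amounts to applying predicates to trace fields. The sketch below shows that idea on an in-memory list in plain Python. It does not use the Braintrust API, CLI, or query syntax; the trace fields (`metadata.env`, `scores.quality`) and the `filter_traces` helper are hypothetical.

```python
from typing import Any, Dict, List, Optional

# Hypothetical trace summaries; real traces carry more fields.
TRACES: List[Dict[str, Any]] = [
    {"id": "t1", "input": "Reset my password", "metadata": {"env": "prod"}, "scores": {"quality": 0.9}},
    {"id": "t2", "input": "Cancel my order", "metadata": {"env": "prod"}, "scores": {"quality": 0.3}},
    {"id": "t3", "input": "reset my password", "metadata": {"env": "staging"}, "scores": {"quality": 0.2}},
]


def filter_traces(
    traces: List[Dict[str, Any]],
    env: Optional[str] = None,
    max_quality: Optional[float] = None,
    contains: Optional[str] = None,
) -> List[Dict[str, Any]]:
    """Keep traces that satisfy every condition that was provided."""
    result = []
    for trace in traces:
        if env is not None and trace["metadata"].get("env") != env:
            continue
        # Traces without a quality score are treated as passing (1.0).
        if max_quality is not None and trace["scores"].get("quality", 1.0) > max_quality:
            continue
        # Case-insensitive keyword match on the input text.
        if contains is not None and contains.lower() not in trace["input"].lower():
            continue
        result.append(trace)
    return result


low_quality_prod = filter_traces(TRACES, env="prod", max_quality=0.5)
password_resets = filter_traces(TRACES, contains="password")
```

Keyword predicates like `contains` only match literal text, which is the gap that semantic approaches such as deep search are meant to cover.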

Monitor with dashboards

Custom dashboards aggregate metrics across your logs and experiments. Track request counts, latency, token usage, costs, scores, and custom metrics over time. Dashboards help you:

- Visualize trends and anomalies

- Compare performance across time periods

- Drill into specific data points

- Share insights with your team
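As a rough picture of what "aggregating metrics over time" means, the following plain-Python sketch buckets trace records by hour and computes request count, average latency, token usage, and cost per bucket. It is independent of Braintrust: the record fields (`start`, `latency_s`, `tokens`, `cost_usd`) and the `hourly_metrics` helper are assumptions made for this example.

```python
from collections import defaultdict
from datetime import datetime
from typing import Any, Dict, List

# Hypothetical per-trace measurements.
TRACES: List[Dict[str, Any]] = [
    {"start": datetime(2024, 1, 1, 9, 5), "latency_s": 1.0, "tokens": 100, "cost_usd": 0.25},
    {"start": datetime(2024, 1, 1, 9, 40), "latency_s": 3.0, "tokens": 300, "cost_usd": 0.5},
    {"start": datetime(2024, 1, 1, 10, 15), "latency_s": 2.0, "tokens": 200, "cost_usd": 0.25},
]


def hourly_metrics(traces: List[Dict[str, Any]]) -> Dict[datetime, Dict[str, float]]:
    """Group traces by the hour they started in and summarize each group."""
    buckets: Dict[datetime, List[Dict[str, Any]]] = defaultdict(list)
    for trace in traces:
        # Truncate the timestamp to the top of the hour to form the bucket key.
        hour = trace["start"].replace(minute=0, second=0, microsecond=0)
        buckets[hour].append(trace)
    return {
        hour: {
            "requests": len(group),
            "avg_latency_s": sum(t["latency_s"] for t in group) / len(group),
            "tokens": sum(t["tokens"] for t in group),
            "cost_usd": sum(t["cost_usd"] for t in group),
        }
        for hour, group in sorted(buckets.items())
    }


metrics = hourly_metrics(TRACES)
```

Comparing two buckets, for example `metrics[datetime(2024, 1, 1, 9)]` against `metrics[datetime(2024, 1, 1, 10)]`, is the same kind of time-period comparison a dashboard chart makes visually.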

Use Loop

Loop is Braintrust’s AI agent that understands your data structure and helps you explore logs through natural language. Available on both the Logs page and individual trace pages, Loop lets you ask questions, identify patterns, and get insights without writing queries. See Analyze logs and Analyze individual traces for more details.

Next steps

- View your logs in the Braintrust dashboard

- Discover insights with Topics

- Use Loop to analyze logs with natural language

- Filter and search for specific traces

- Use deep search for semantic queries

- Score online to evaluate production quality